Welcome to Forge

Forge is a modern, self-hosted web interface for managing popular DevOps tools with enterprise-grade compliance features.

What is Forge?

Forge is a comprehensive automation platform that provides a unified interface for running Ansible playbooks, Terraform/OpenTofu infrastructure code, Packer builds, PowerShell scripts, and more. Built with enterprise compliance in mind, Forge combines powerful automation capabilities with built-in support for DISA STIG compliance, golden image management, and comprehensive security features.

Key Differentiators

- Enterprise Compliance First - Built-in STIG compliance, OpenSCAP scanning, and multiple compliance framework support

- Golden Image Management - 16 pre-built STIG-hardened Packer templates for multi-cloud deployments

- Self-Hosted & Secure - All data, credentials, and logs remain on your infrastructure with HashiCorp Vault integration

- Easy Installation - Linux Service Installer with automated setup, TLS configuration, and dependency management

- Developer-Friendly - Modern web UI, REST API, and support for all popular DevOps tools

Key Features

Infrastructure as Code

Forge supports the full spectrum of infrastructure automation:

- Ansible - Playbook execution with STIG hardening roles and inventory management

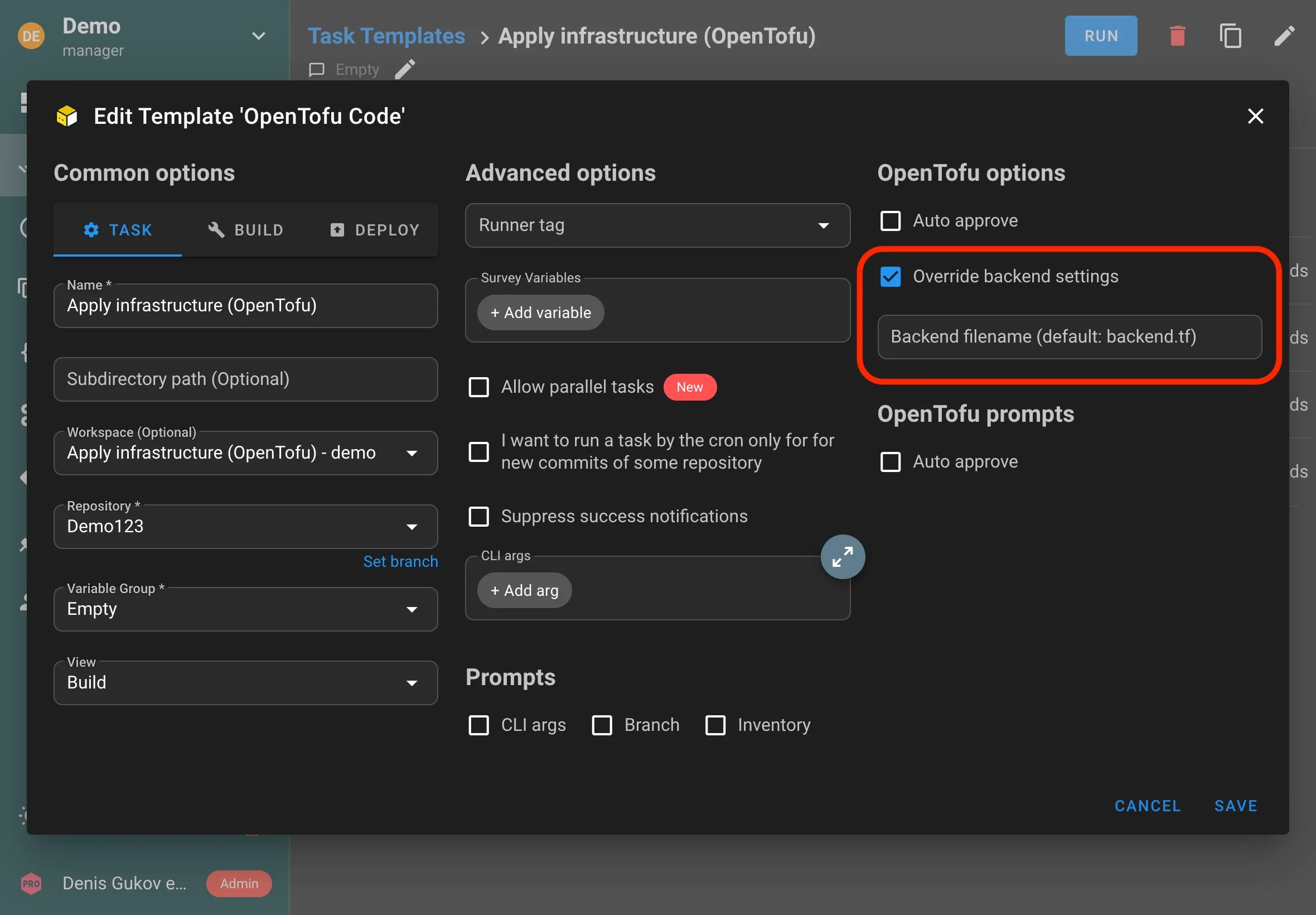

- Terraform/OpenTofu - Infrastructure provisioning with remote state management and workspaces

- Terragrunt - DRY Terraform configurations and multi-environment management

- Terramate - Terraform stack orchestration and drift detection

- Terraformer - Import existing infrastructure from AWS, Azure, GCP, VMware, and Kubernetes

- Pulumi - Modern infrastructure as code with multiple programming languages

- Packer - Build golden images for multiple cloud providers with STIG hardening

- PowerShell & Shell - Execute scripts on Windows and Linux systems

- Python - Run Python scripts and automation workflows

Golden Image Management

Build and manage pre-configured, hardened VM images:

- 16 Pre-Built Templates - Production-ready STIG-hardened templates for RHEL 8/9, Ubuntu 22.04, and Windows Server 2022

- Multi-Cloud Support - AWS (AMIs), Azure (Managed Images), GCP (Compute Images), VMware vSphere

- Visual Builder - Create Packer templates without writing HCL code

- HCL Editor - Advanced template editing with validation and Git integration

- Image Catalog - Centralized registry of built images with search and filtering

- STIG Hardening - Automated DISA STIG compliance built into templates

- Template Library - Import and share templates across projects

Compliance & Security

Enterprise-grade compliance management built into the platform:

- STIG Viewer - Interactive compliance finding management with status tracking

- Policy Packs - Curated Ansible playbooks for automated remediation

- Remediation Coverage - Track automated vs manual findings with coverage metrics

- Manual Task Assignment - Bulk assign templates to manual findings for automation

- Multiple Frameworks - Import multiple compliance standards per project (STIG, CIS, NIST, PCI-DSS)

- CKL Export - Generate STIG checklists for certification and reporting

- OpenSCAP Integration - SCAP content management and automated compliance scanning

- Finding Management - Track status (NotAFinding, Open, NotApplicable), attach screenshots, add comments

- Screenshot Attachments - Document compliance evidence inline

Enterprise Features

Built for teams and organizations:

- RBAC - Fine-grained role-based access control (Owner, Manager, Task Runner, Reporter, Guest)

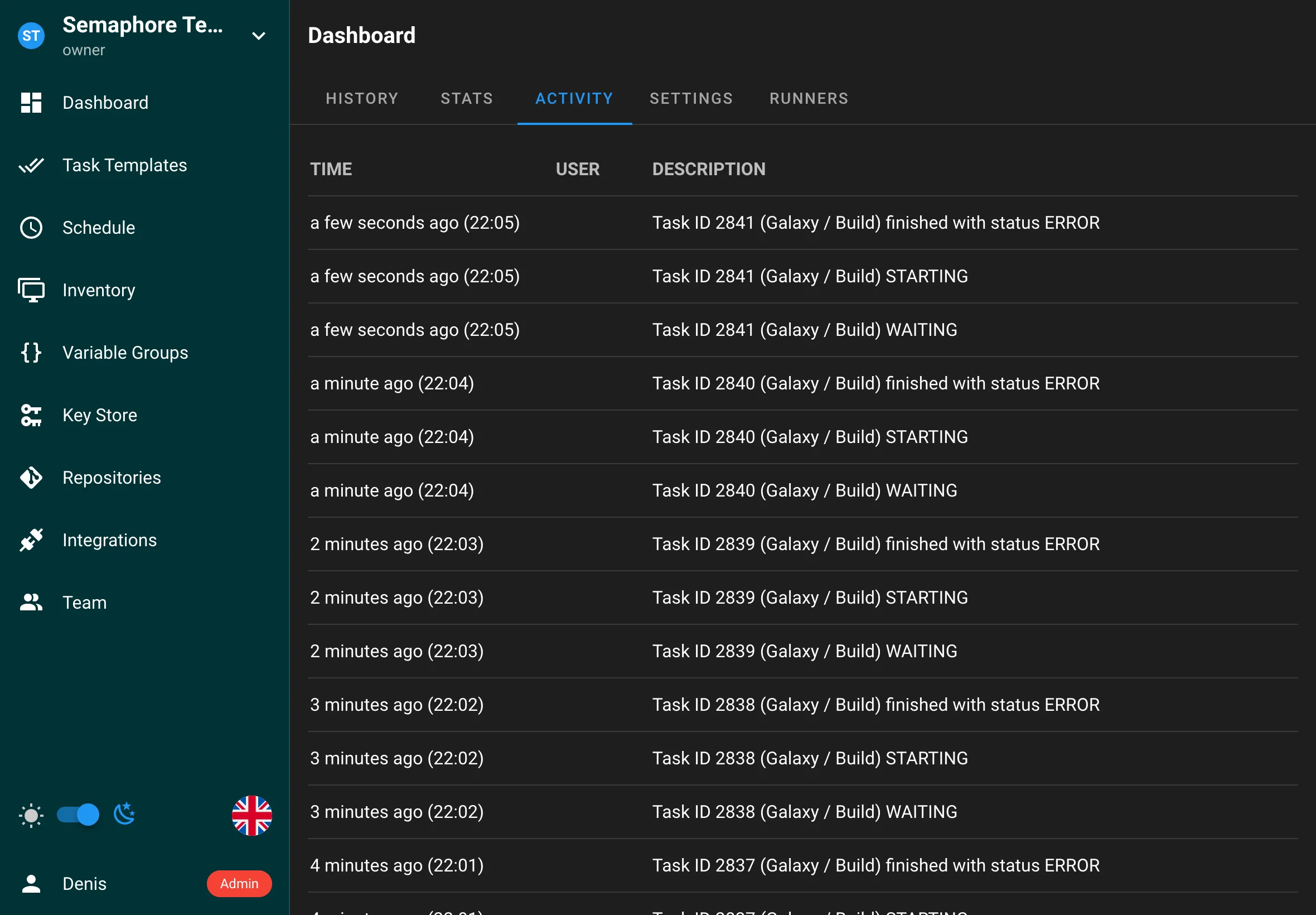

- Audit Logging - Complete audit trail of all actions and changes

- Multi-Project - Isolated project workspaces for teams and environments

- Secret Management - Encrypted credential storage with HashiCorp Vault integration

- LDAP/OpenID Connect - Enterprise authentication with support for 10+ providers

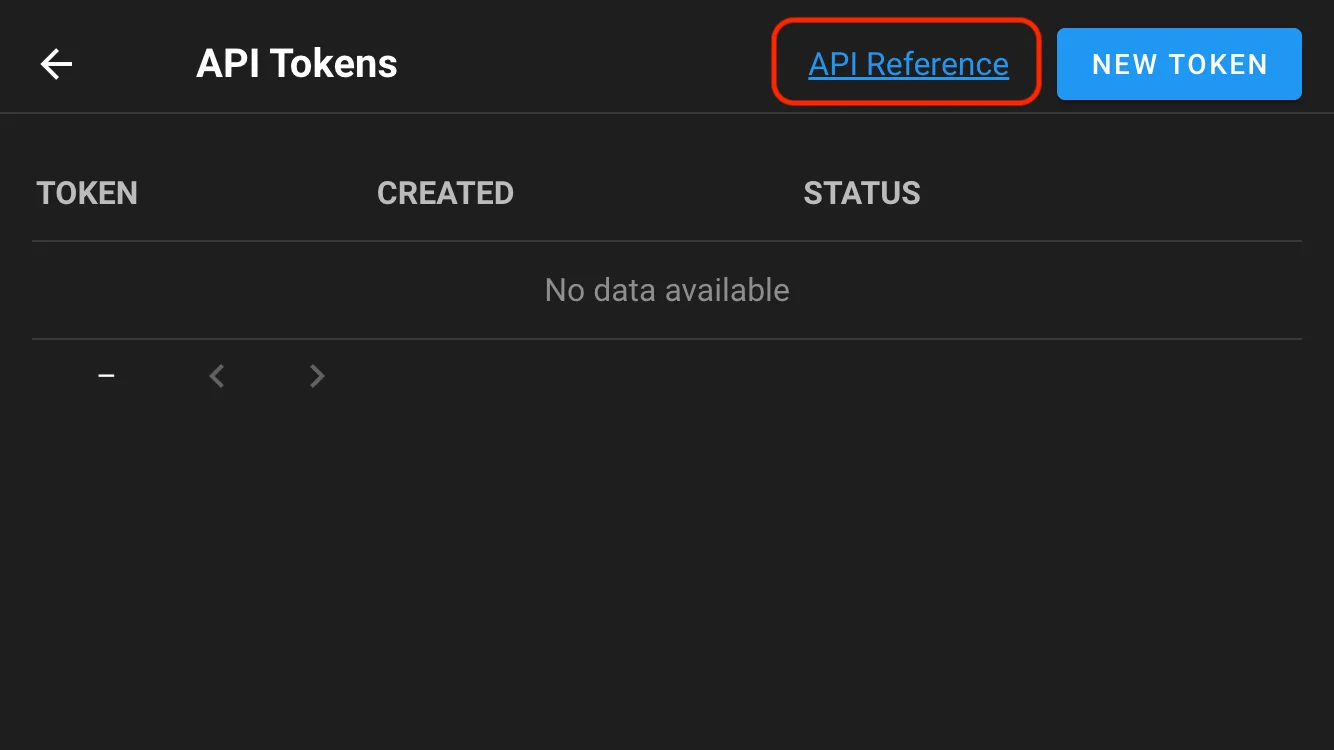

- API-First - Full REST API for automation and integration

- Session Management - Configurable session timeouts with inactivity-based logout

- TLS 1.3 - Modern encryption with automatic certificate management

Bare Metal Automation

Deploy and manage physical servers:

- PXE Boot Deployment - Network-based installation with kickstart/preseed

- ISO Installation - Custom bootable ISOs with embedded configuration

- Golden Image Deployment - Deploy pre-built STIG-hardened images to bare metal

- BMC Management - Out-of-band management for Dell iDRAC, HP iLO, and Redfish-compatible systems

- GigaIO FabreX Integration - Composable infrastructure management for dynamic resource allocation

Infrastructure Import

Bring existing infrastructure into code:

- Terraformer Integration - Import existing infrastructure from AWS, Azure, GCP, VMware, Kubernetes

- Resource Selection - Choose specific resource types and regions

- Tag Filtering - Import only resources matching specific tags

- Template/Repository Output - Save as executable templates or Git repositories

- State Generation - Automatic Terraform state file generation

Linux Service Installer

Streamlined installation for Linux servers:

- Systemd Service - Automatic service installation on Ubuntu, RHEL, Rocky, Alma, SLES

- Encrypted Configuration - Secrets stored in /etc/forge/config.enc with automatic key management

- Automated TLS - Built-in Let's Encrypt provisioning with certbot (self-signed fallback)

- Vault Integration - HashiCorp Vault installed, initialized, and configured automatically

- Dependency Bootstrap - Required CLI tools (Ansible, OpenSCAP, QEMU) auto-installed per distribution

Architecture

Forge is built with a modern, cloud-native architecture:

- Backend: Go-based API server with RESTful endpoints

- Frontend: Vue.js web application with responsive design

- Database: SQLite (default), PostgreSQL, MySQL, or BoltDB support

- Storage: Local file system or cloud storage for uploads and logs

- Secrets: HashiCorp Vault or local encryption for credential storage

- Deployment: Single binary, Docker container, or Kubernetes deployment

System Requirements

Minimum:

- CPU: 2 cores

- RAM: 2 GB

- Disk: 10 GB free space

- OS: Linux (x64, ARM64), macOS, Windows (via WSL)

Recommended (Production):

- CPU: 4+ cores

- RAM: 4+ GB

- Disk: 50+ GB free space (for logs, task files, images)

- Database: PostgreSQL or MySQL for multi-user environments

- Network: HTTPS with valid certificate

Use Cases

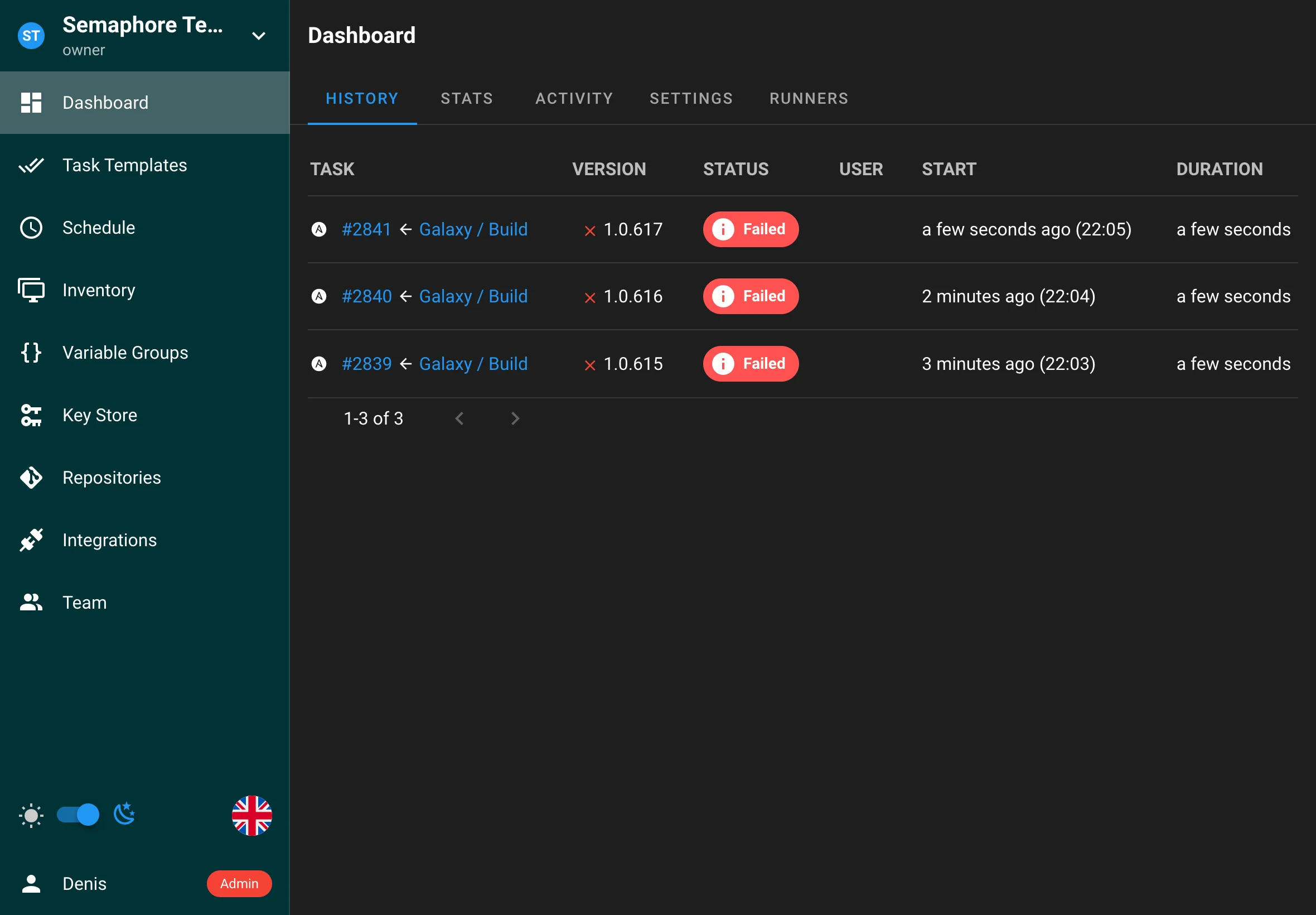

DevOps Teams

Run Ansible playbooks, deploy Terraform infrastructure, and manage infrastructure as code from a single unified interface. Schedule tasks, manage credentials securely, and collaborate across teams.

Security & Compliance Teams

Import STIG checklists, track compliance findings, automate remediation with Policy Packs, and export CKL files for certification. Manage multiple compliance frameworks in one place.

Infrastructure Teams

Build golden images with Packer, deploy to multiple cloud providers, import existing infrastructure with Terraformer, and manage bare metal servers with BMC integration.

Platform Engineering

Provide self-service infrastructure automation to development teams, manage secrets with Vault, and maintain compliance across all infrastructure deployments.

Quick Start

1. Installation

Choose your preferred installation method:

- Linux Service Installer - Recommended for Linux servers (Ubuntu, RHEL, Rocky, Alma, SLES)

- Docker - Fast setup with containers

- Binary File - Manual installation

- Kubernetes - Helm chart deployment

- Cloud Platforms - AWS, Azure, GCP guidance

2. Initial Setup

- Access the web UI at http://localhost:3000 (or your configured address)

- Create an admin user during initial setup

- Configure database connection (SQLite is default)

- Complete basic configuration

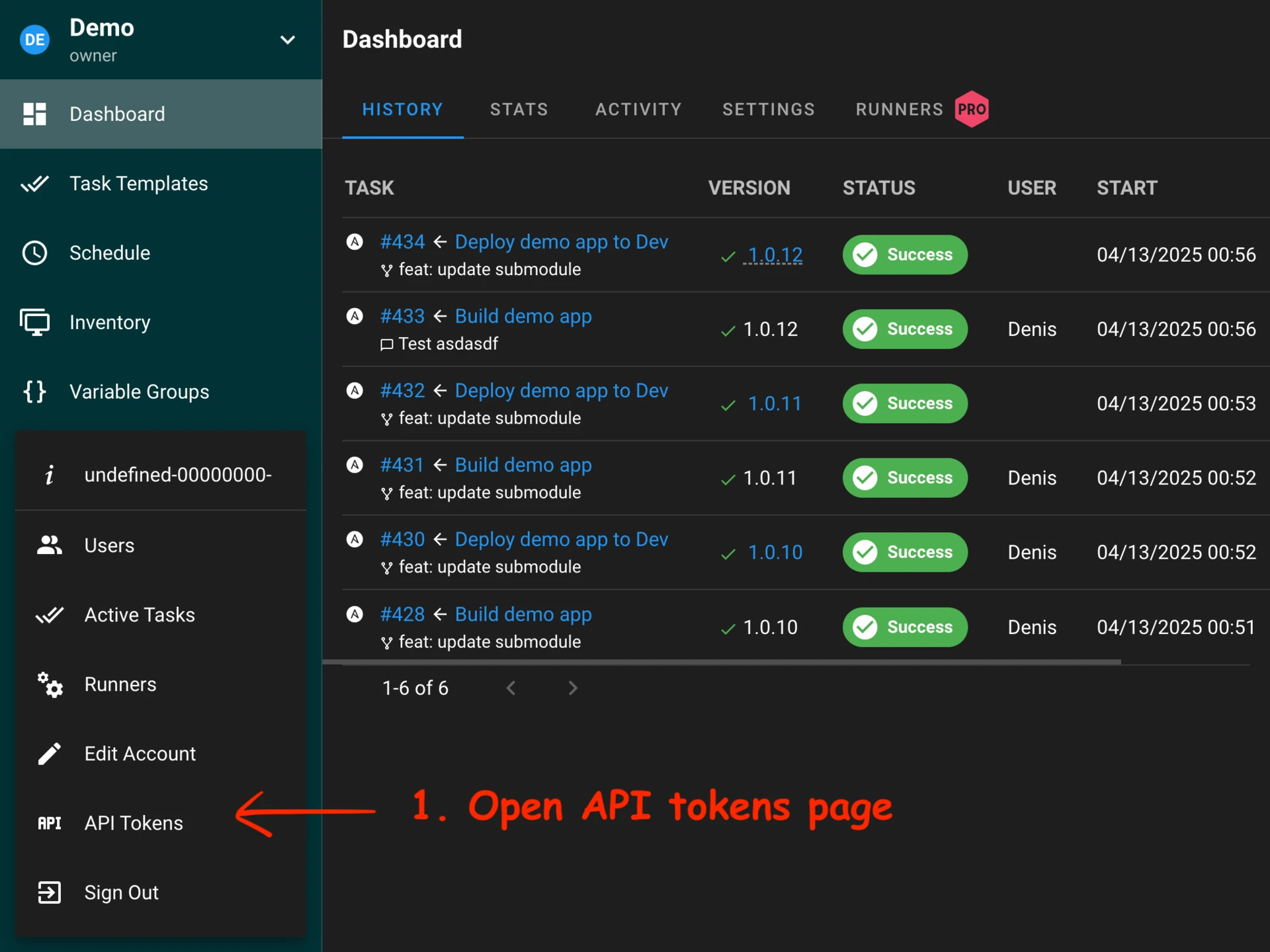

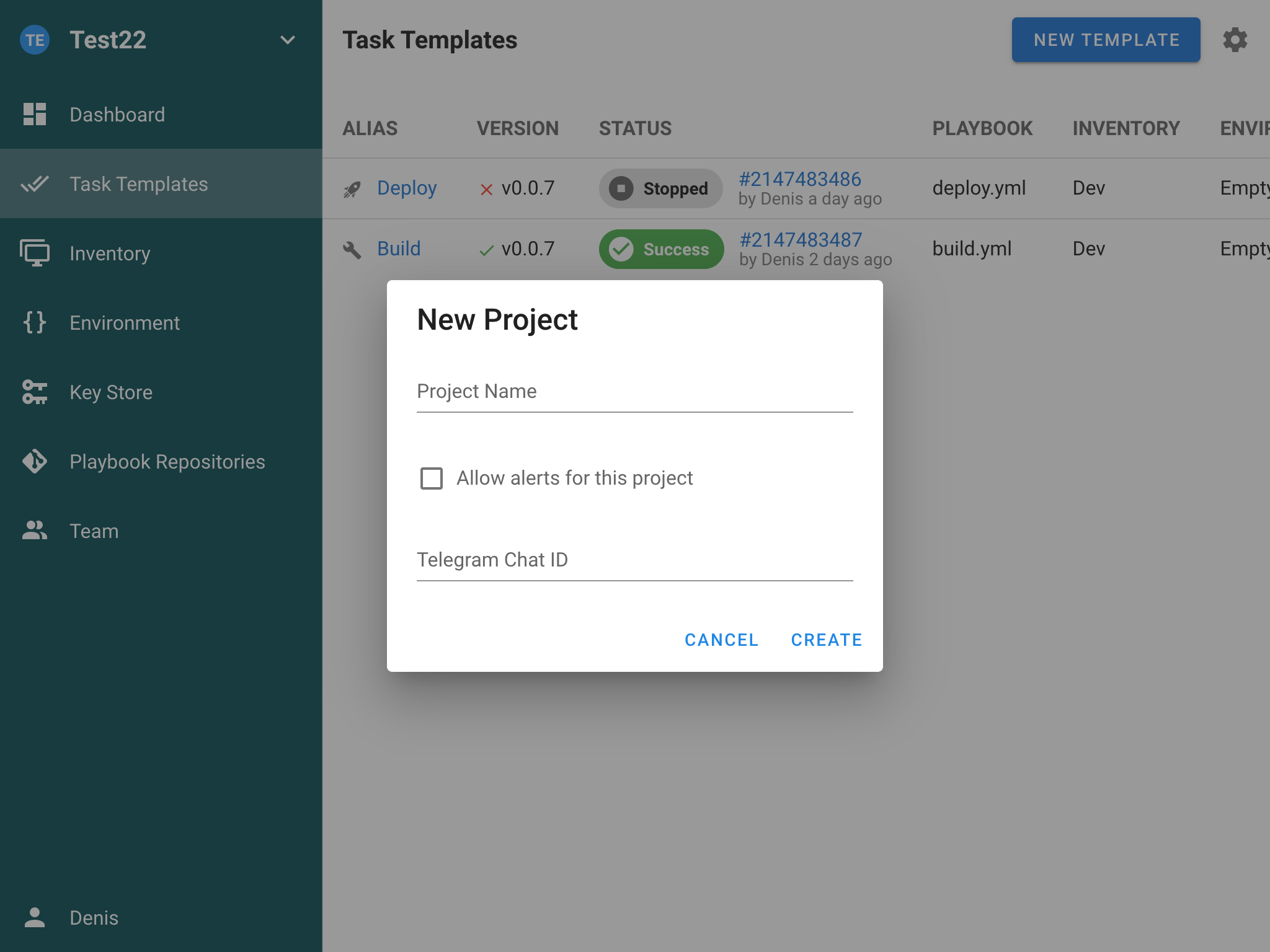

3. Create Your First Project

- Create a new project

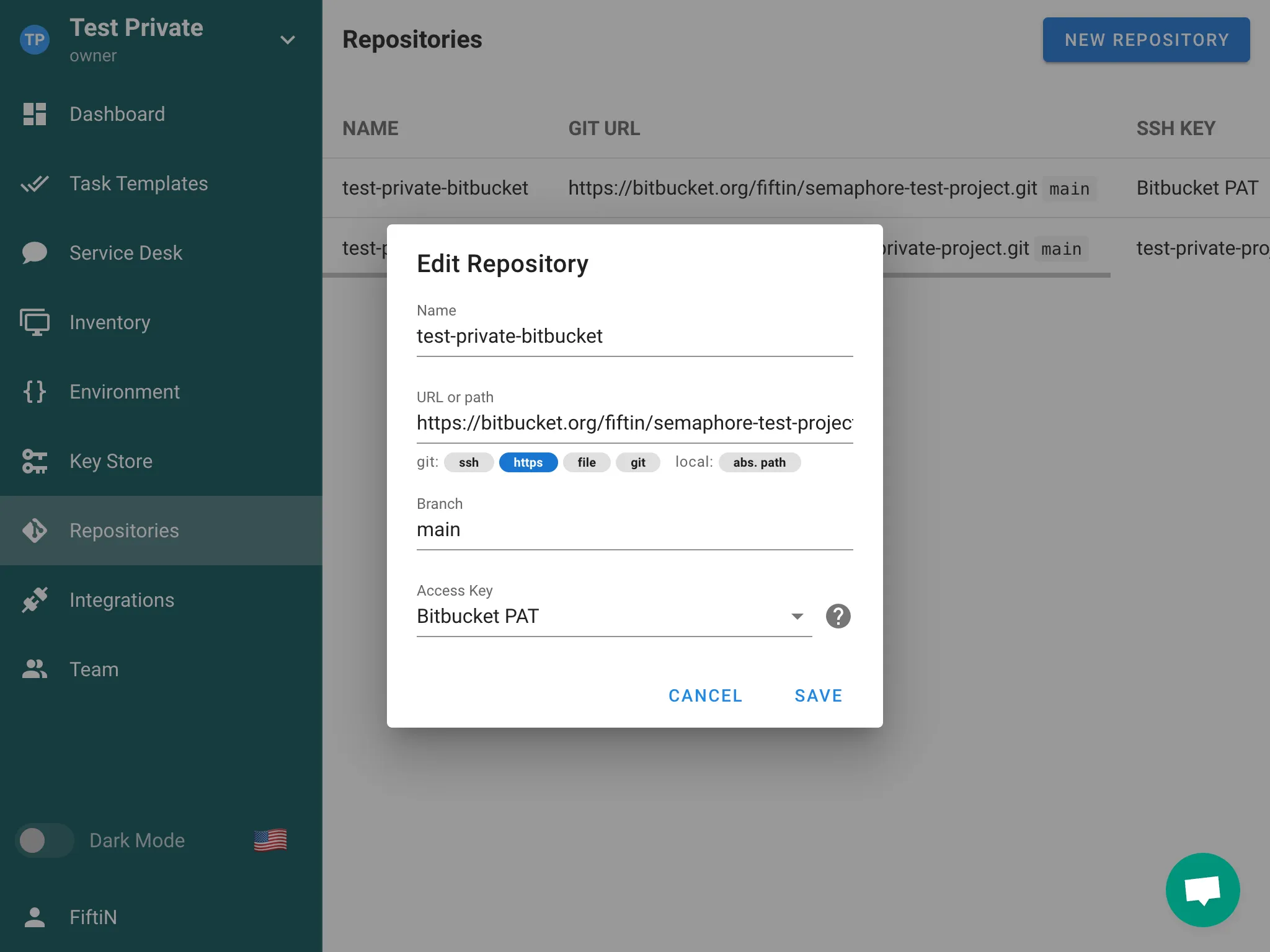

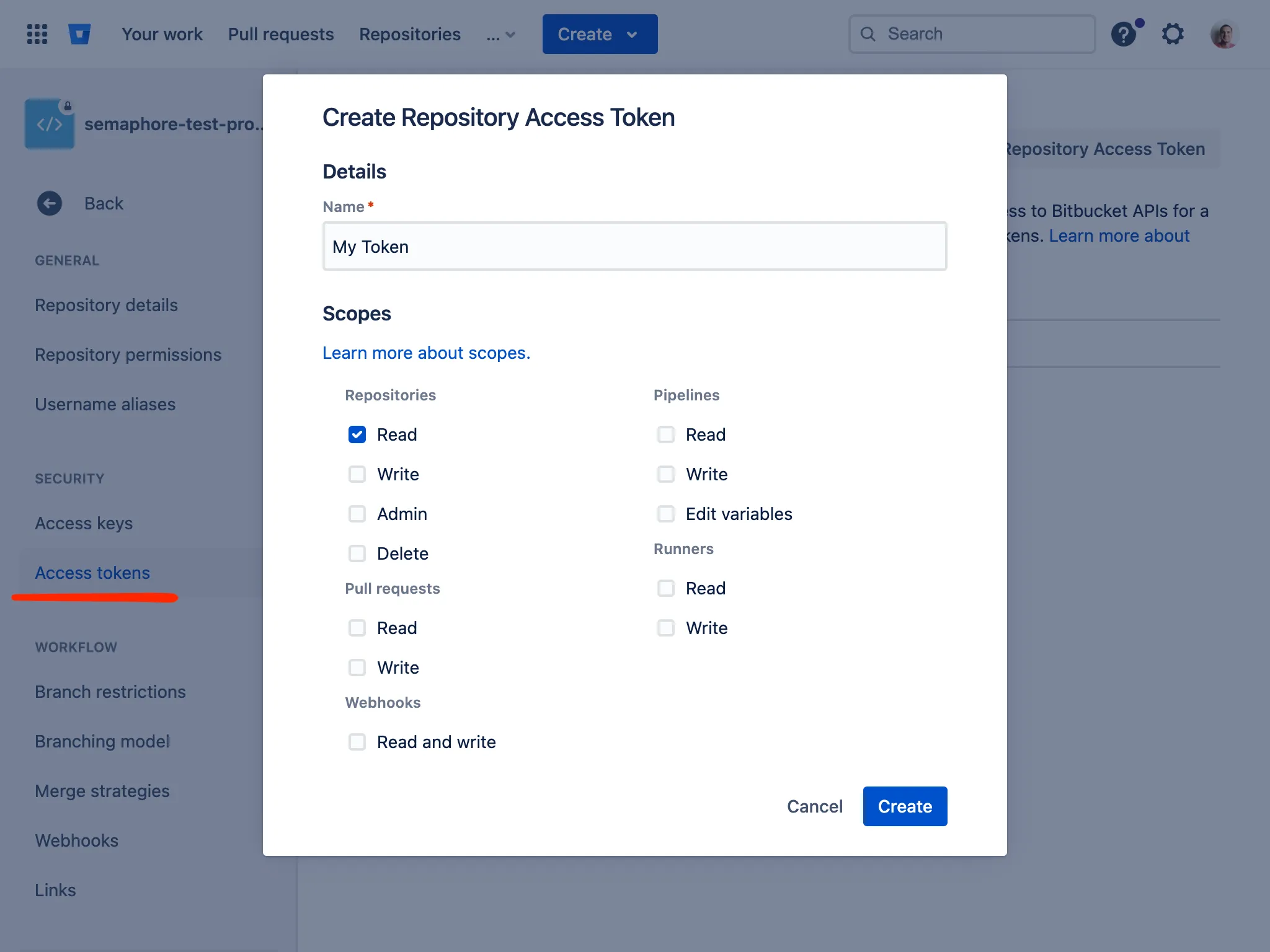

- Add credentials to Key Store (SSH keys, cloud credentials)

- Add an inventory with target hosts

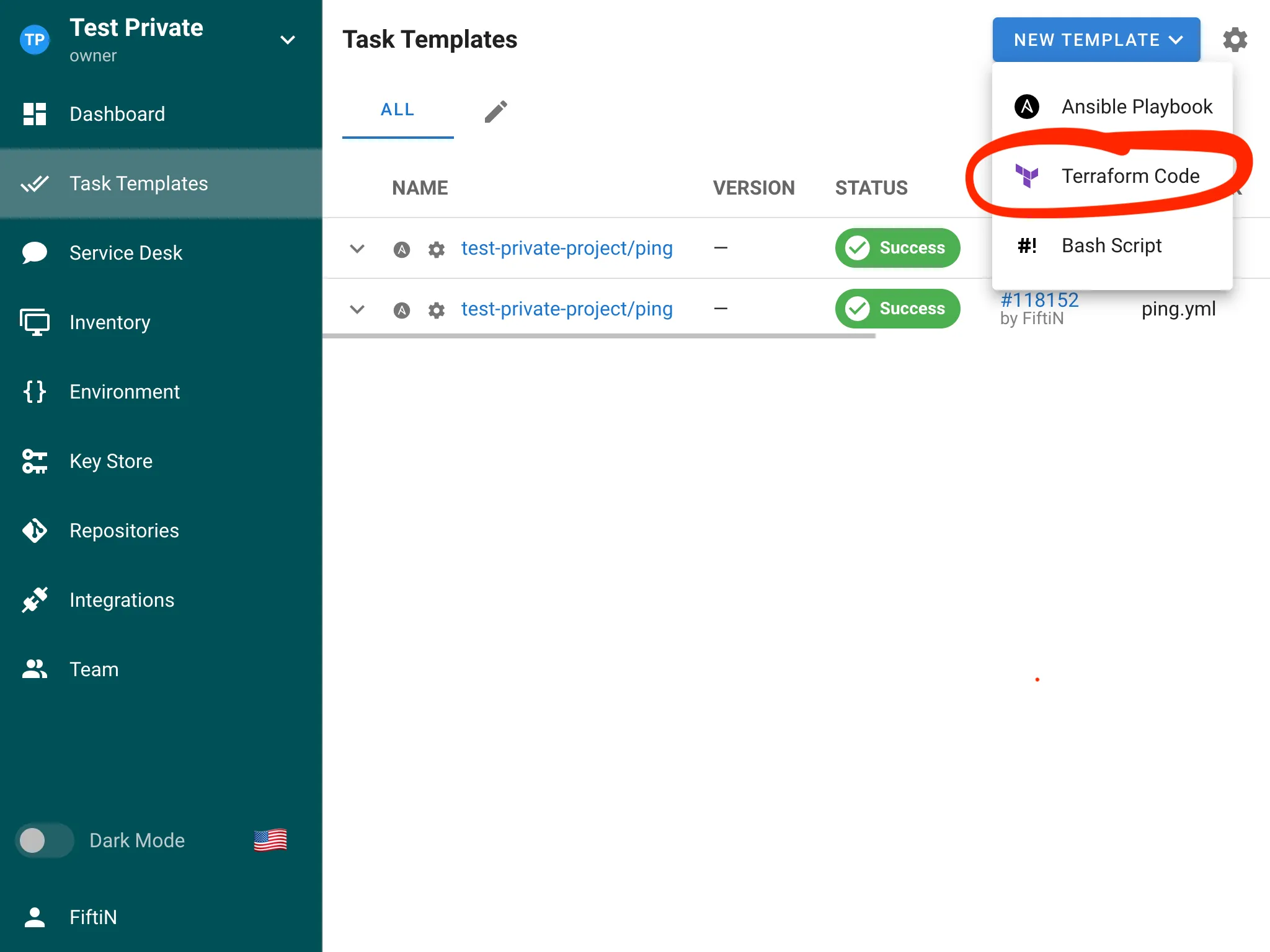

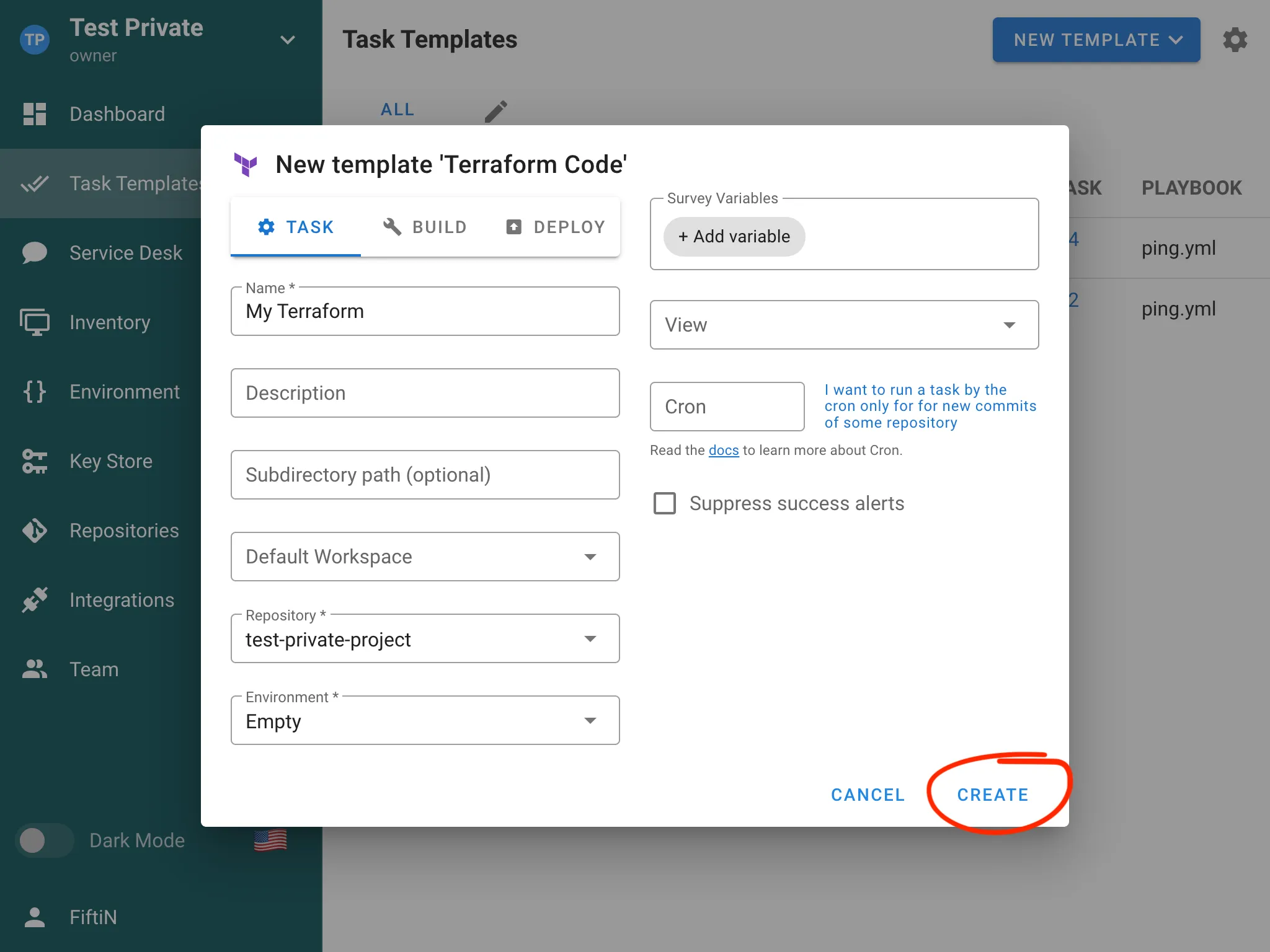

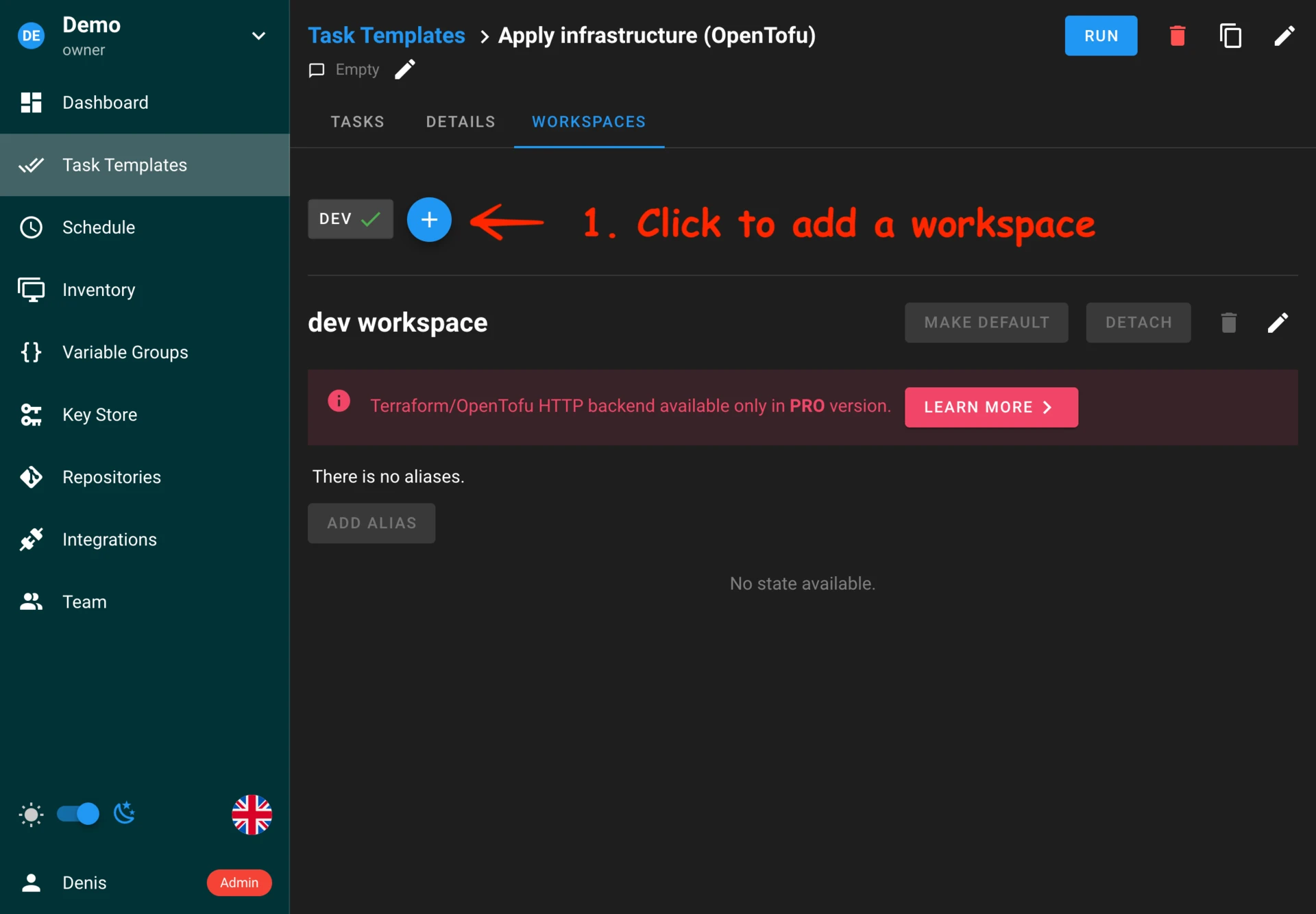

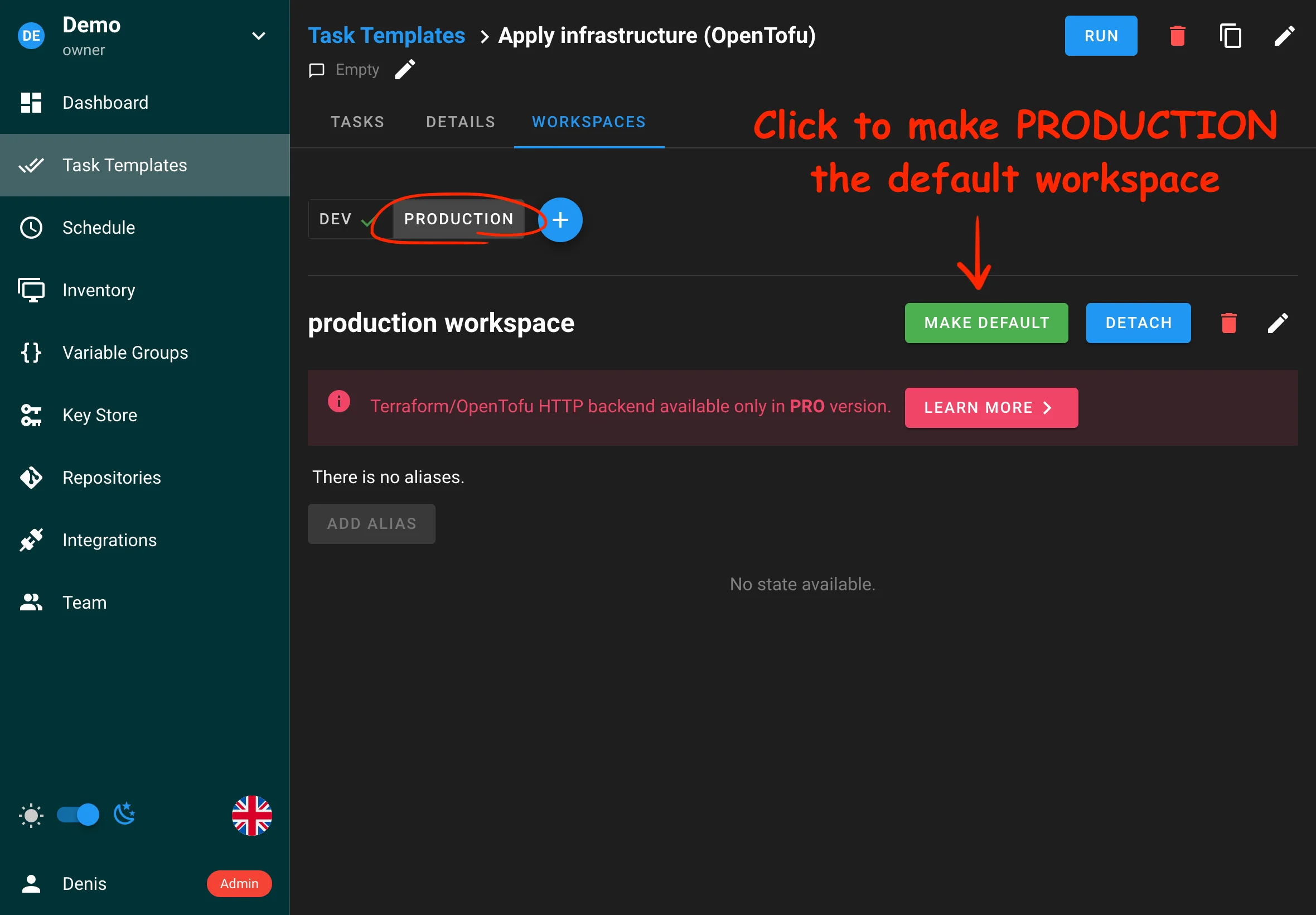

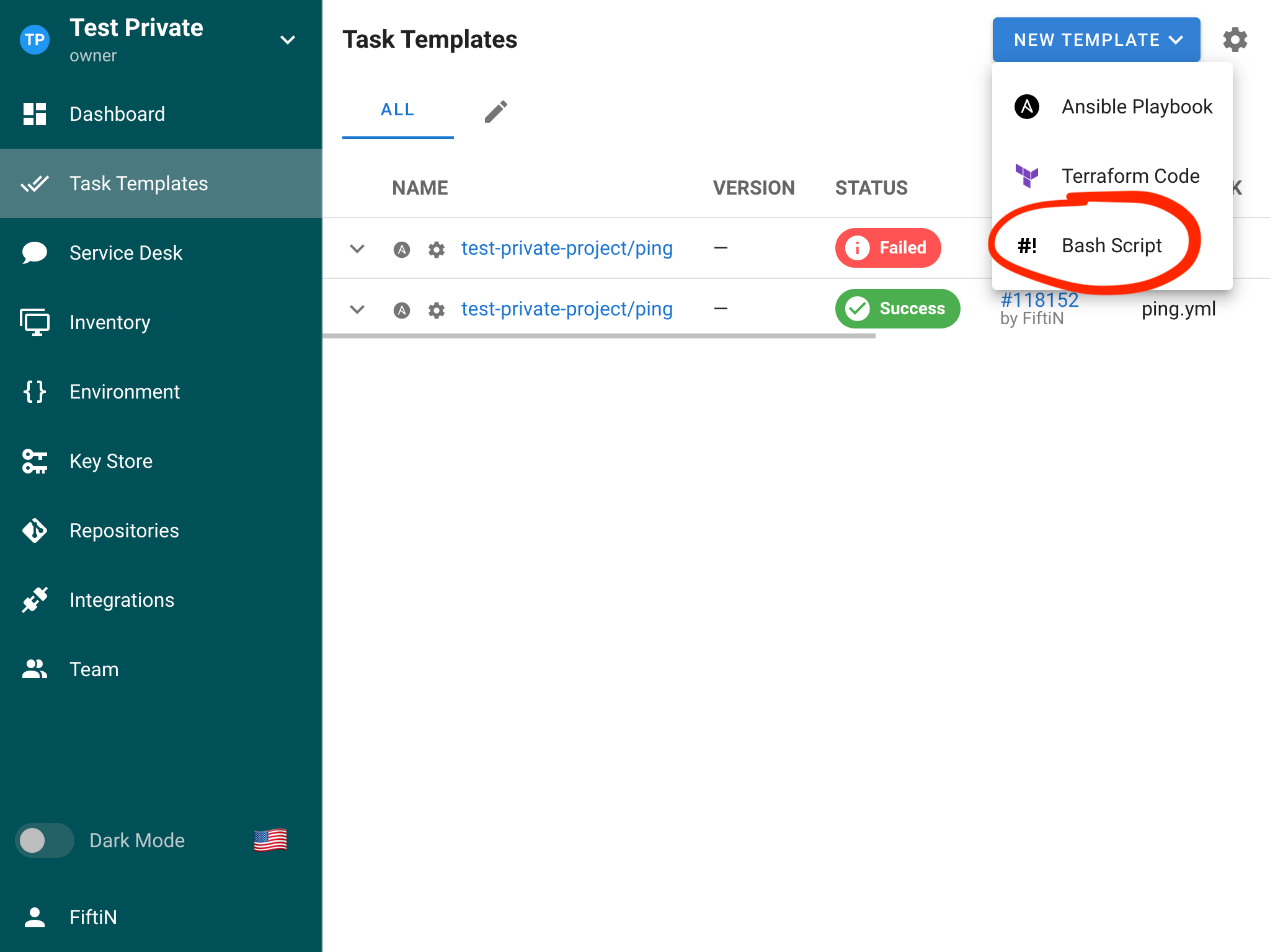

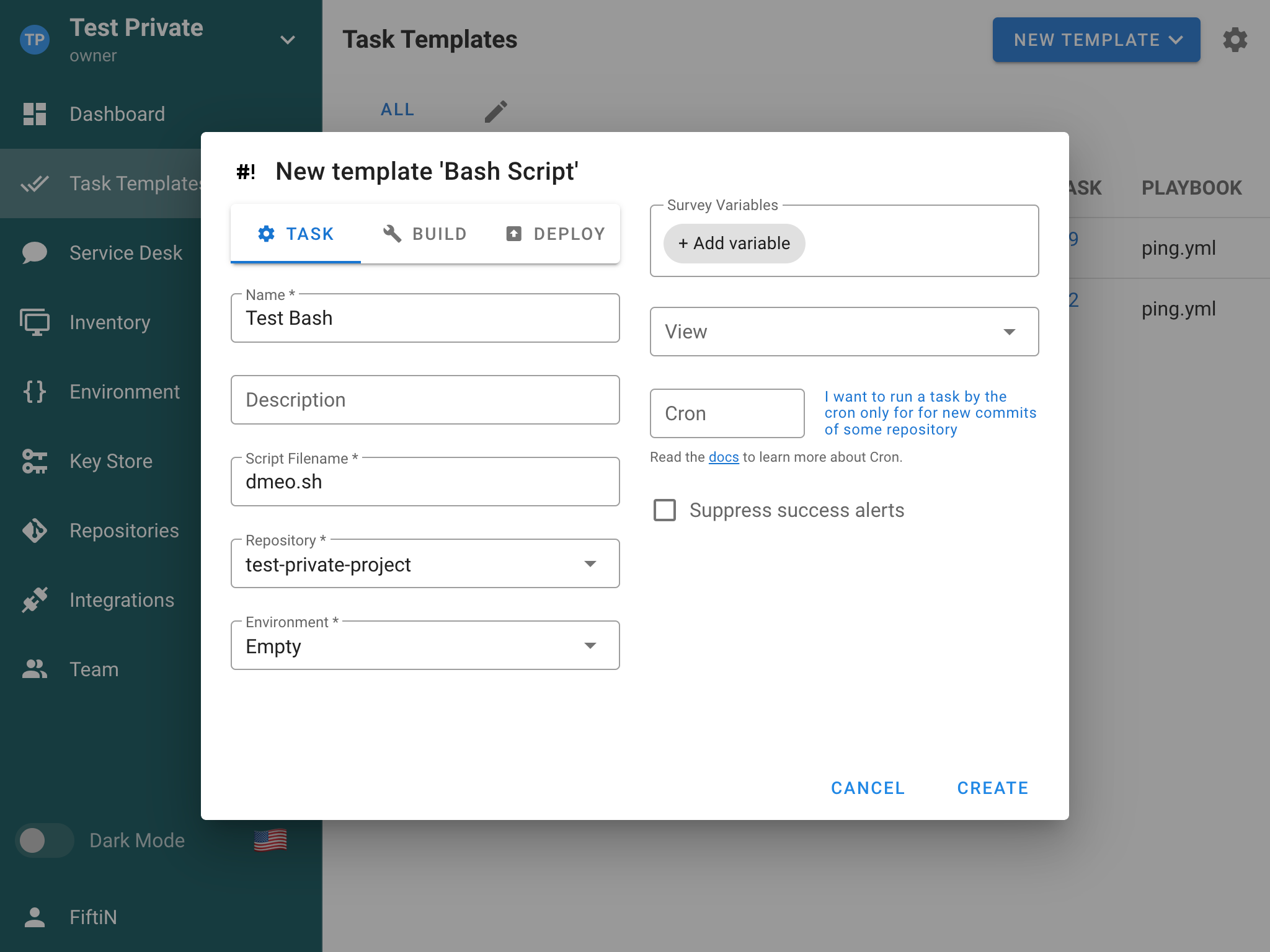

- Create a task template (Ansible, Terraform, Shell, etc.)

- Run your first task!

For detailed steps, see the Getting Started Guide.

Key Concepts

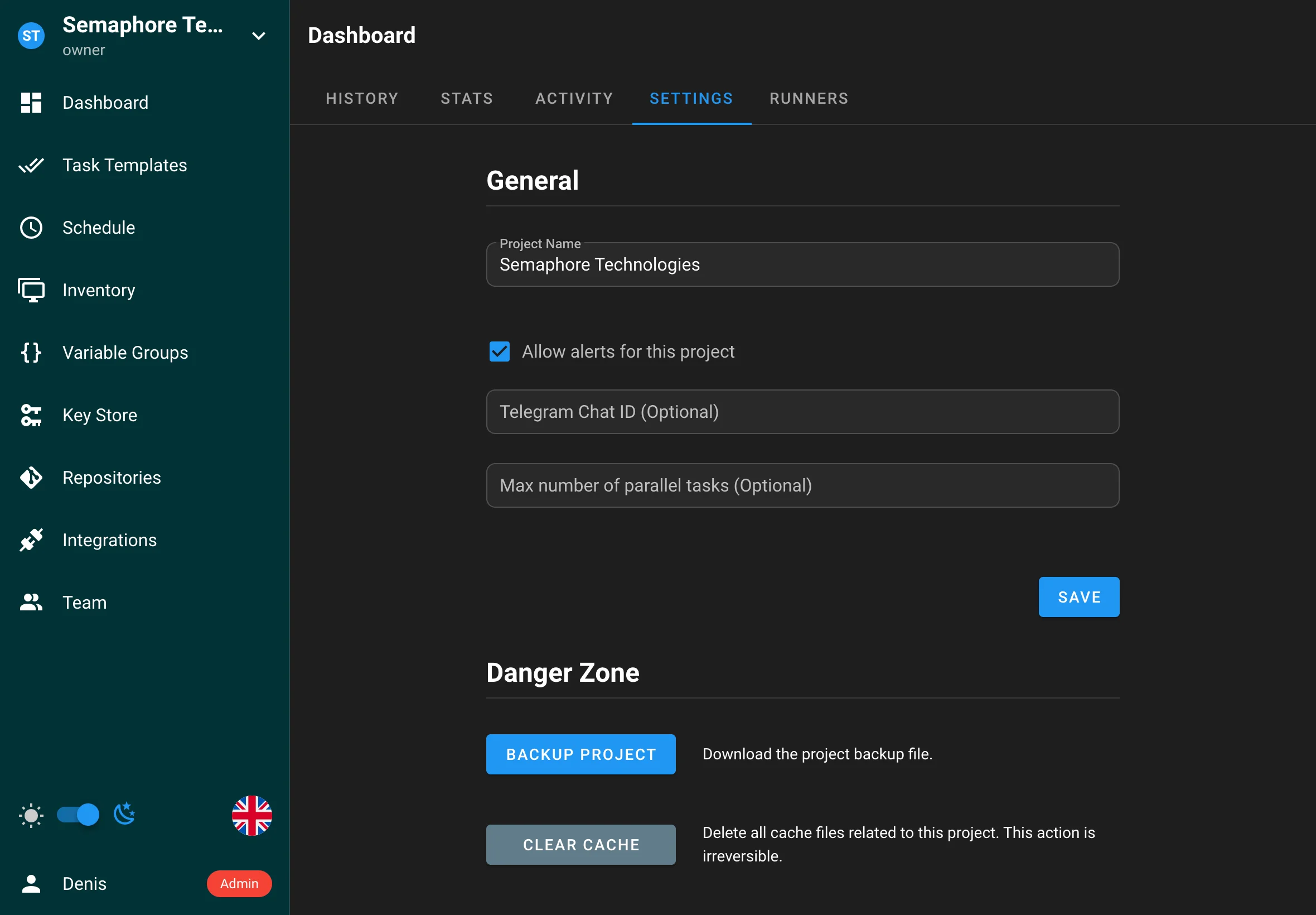

- Projects - Collections of related resources, configurations, and tasks

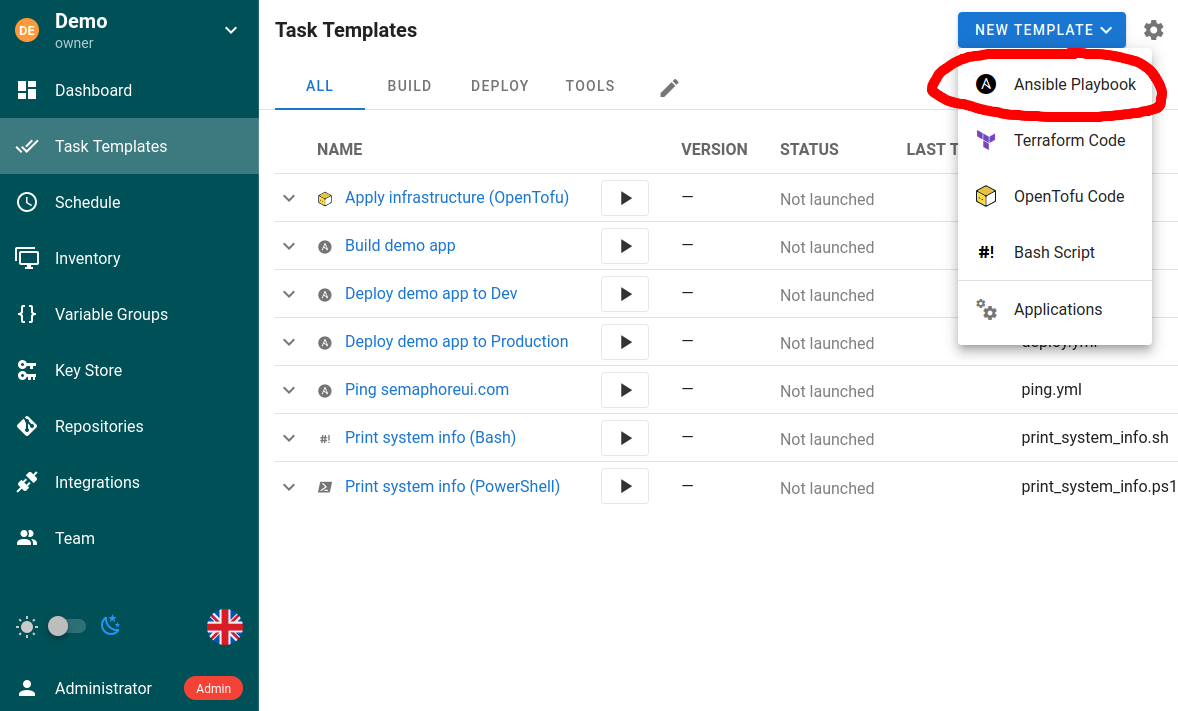

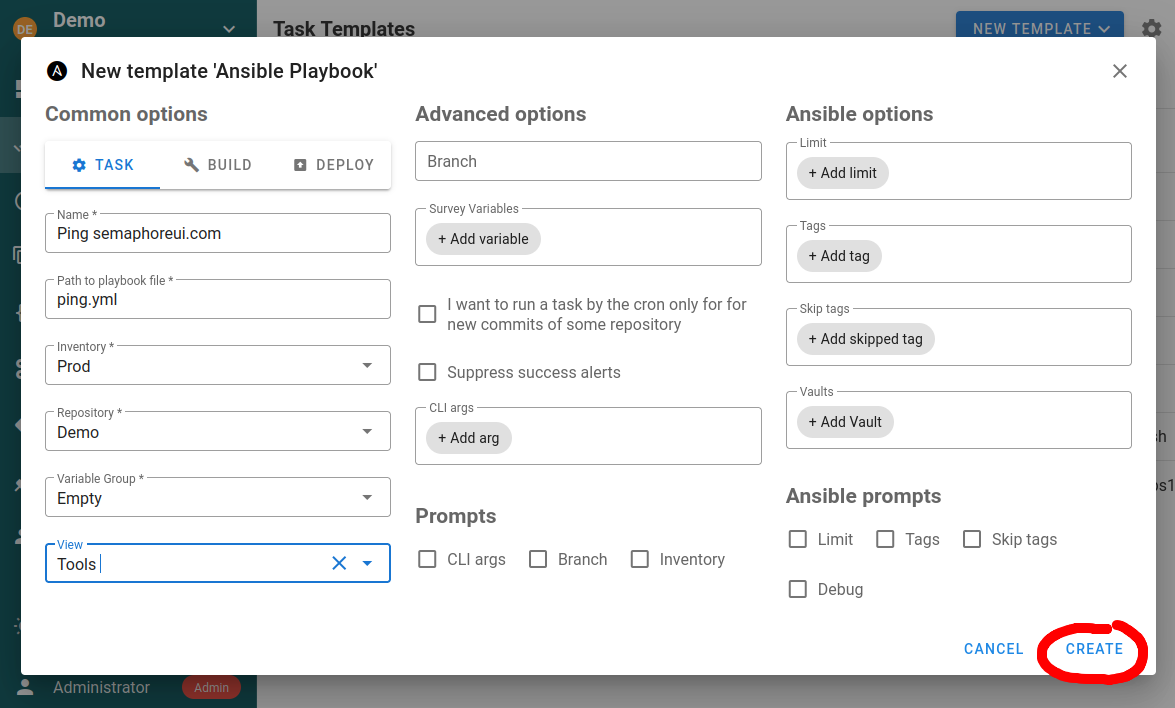

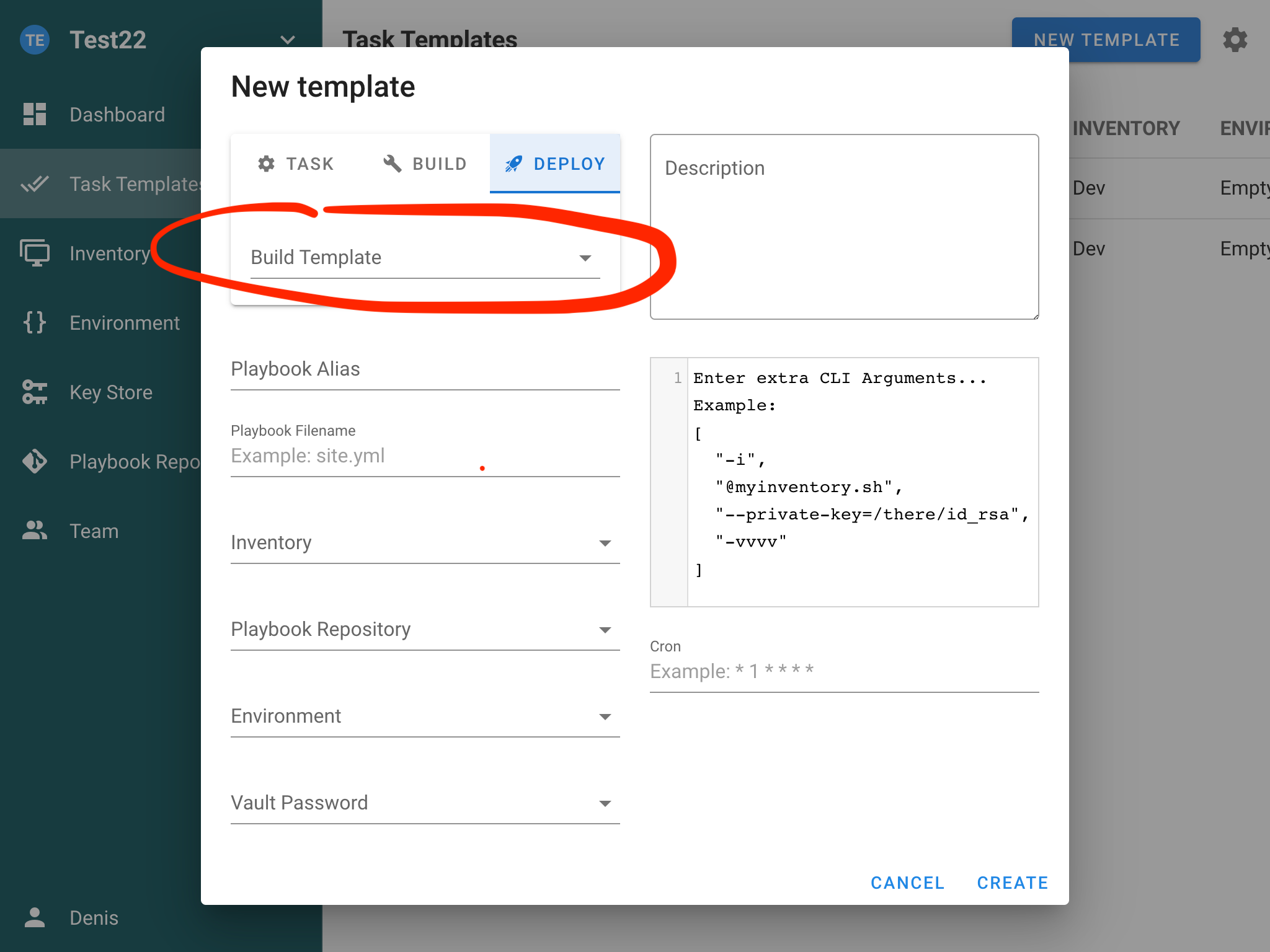

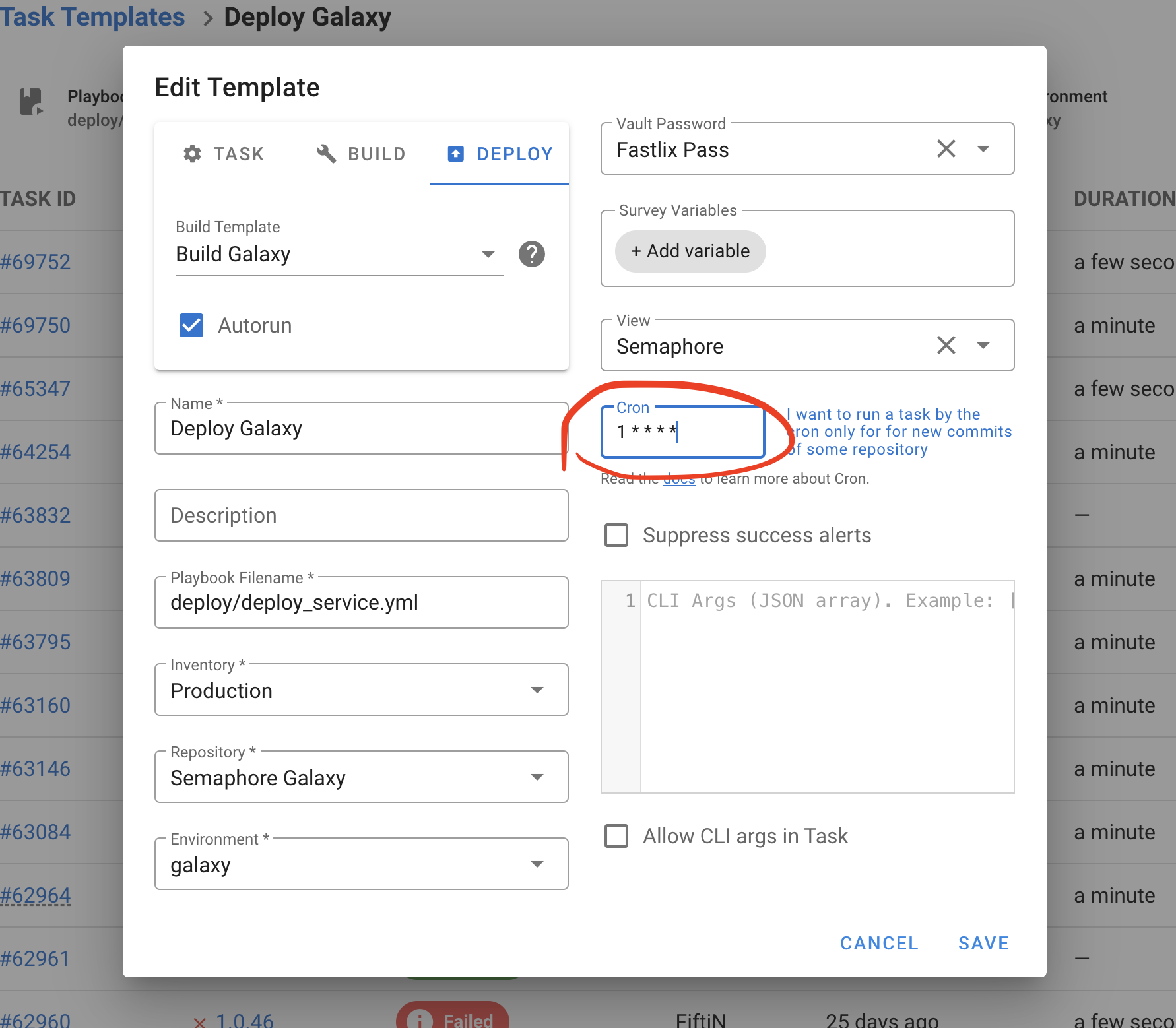

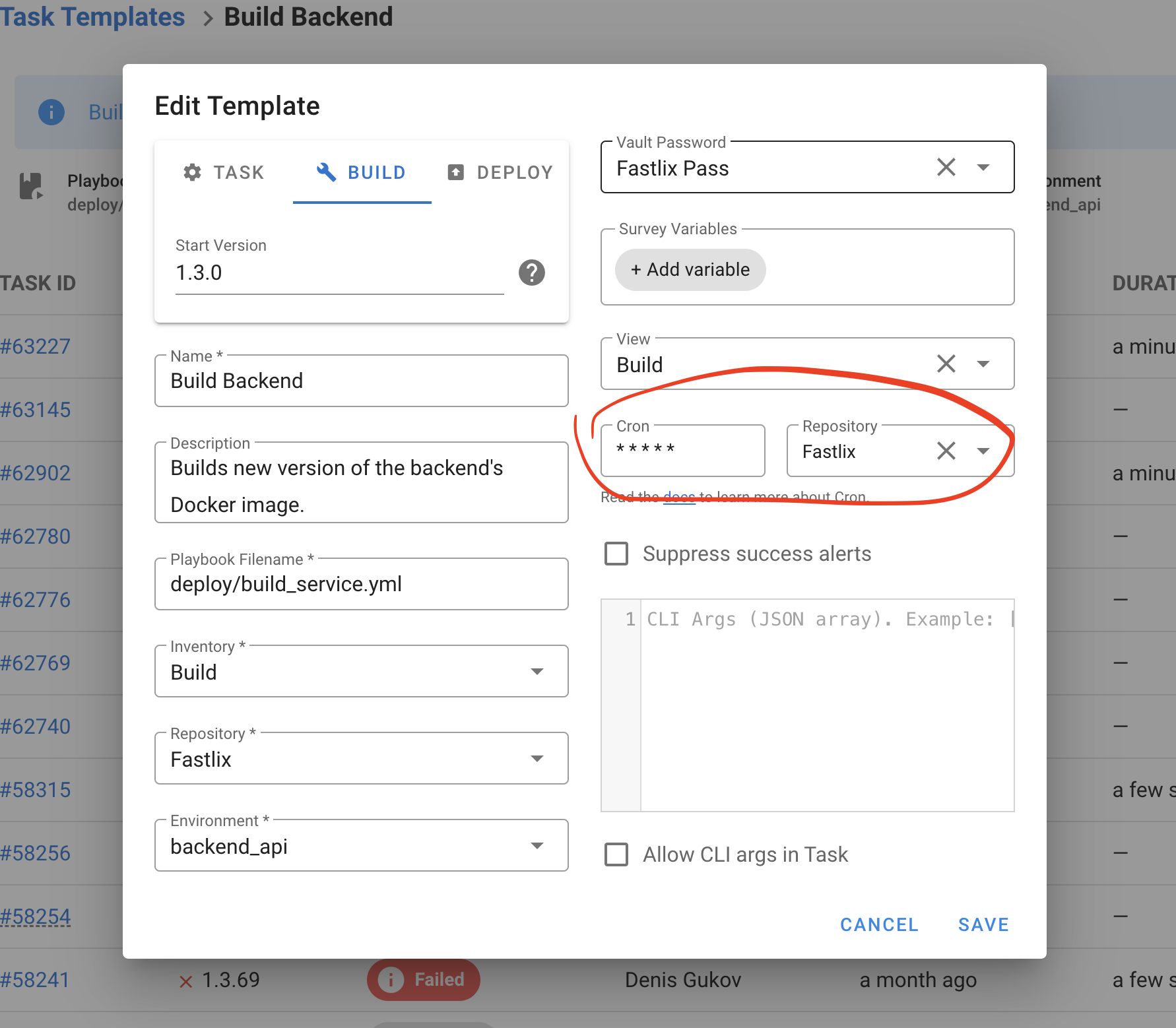

- Task Templates - Reusable definitions of tasks that can be executed on demand or scheduled

- Tasks - Specific instances of jobs or operations executed by Forge

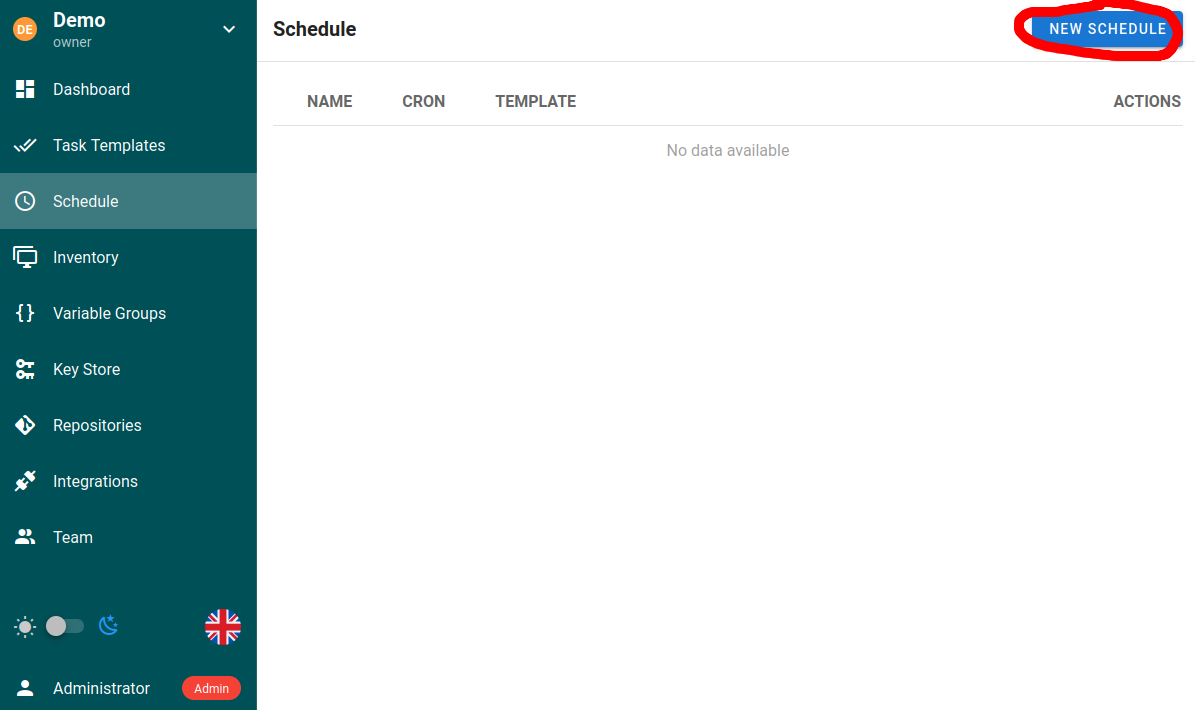

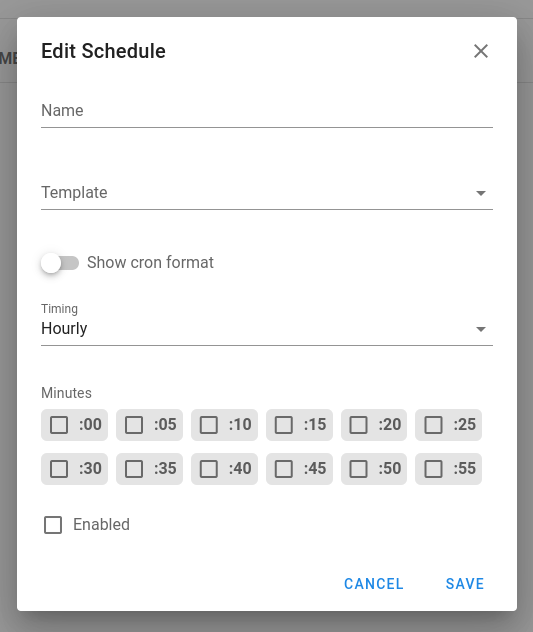

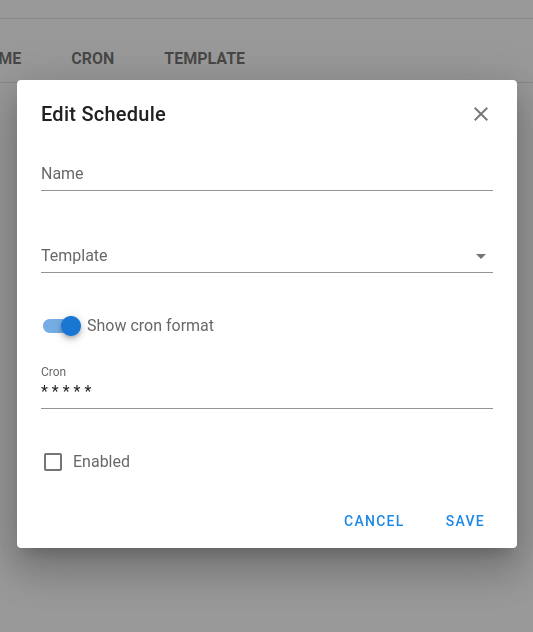

- Schedules - Automate task execution at specified times or intervals

- Inventory - Collections of target hosts (servers, VMs, containers) on which tasks will be executed

- Variable Groups - Configuration contexts that hold sensitive information such as environment variables and secrets

- Compliance Frameworks - Imported compliance standards (STIG, CIS, NIST, PCI-DSS) with findings and remediation tracking

- Golden Images - Pre-configured, hardened VM/AMI images built with Packer

Database Support

Forge supports multiple database backends:

- SQLite (Default) - Single-file database, zero configuration, perfect for development and small-medium deployments

- PostgreSQL - Recommended for enterprise deployments and high availability

- MySQL - Supported for existing MySQL infrastructure

- BoltDB - Embedded key/value database for simple deployments

Why SQLite is the Default

- ✅ Zero configuration - just one file

- ✅ Full feature parity with PostgreSQL/MySQL

- ✅ Enterprise features (Vault integration, Secret Storage, etc.)

- ✅ Perfect for teams up to 50 users

- ✅ Easy backups (copy the file)

- ✅ No separate database server needed
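The "copy the file" backup can be sketched as a small shell function. This is a minimal sketch rather than a Forge command: the function name backup_file and the file name forge.sqlite are assumptions made for illustration. On a real host, stop the service first so the SQLite file is not copied in the middle of a write.

```shell
#!/bin/sh
# backup_file SRC DEST_DIR
# Copies SRC into DEST_DIR with a timestamp suffix and prints the new path.
# Hypothetical helper for illustration; not part of Forge.
backup_file() {
    src=$1
    dest_dir=$2
    stamp=$(date +%Y%m%d-%H%M%S)
    dest="$dest_dir/$(basename "$src").$stamp"
    mkdir -p "$dest_dir" || return 1
    cp -p "$src" "$dest" || return 1
    echo "$dest"
}

# Demonstration against a throwaway file, so the sketch runs anywhere.
demo_dir=$(mktemp -d)
echo "demo data" > "$demo_dir/forge.sqlite"   # assumed file name
backup_file "$demo_dir/forge.sqlite" "$demo_dir/backups"
rm -rf "$demo_dir"
```

In practice you would run sudo systemctl stop forge, call backup_file on the database file under /var/lib/forge/database/ (the exact file name depends on your configuration), and then start the service again.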

Documentation

Getting Started

- Getting Started Guide - Step-by-step introduction

- Installation Guide - Installation options

- Configuration Guide - Configure Forge

User Guides

- User Guide - Day-to-day usage and features

- Compliance Management - STIG and compliance workflows

- Golden Images - Build and manage images

- Bare Metal Automation - Physical server deployment

Administration

- Administration Guide - Installation, configuration, security

- Security Guide - Secure your installation

- Authentication - LDAP and OpenID setup

- API Reference - REST API documentation

Support

- FAQ - Common questions and troubleshooting

- Troubleshooting - Resolve common issues

Links

- Source Code: https://github.com/Digital-Data-Co/forge

- Issue Tracking: https://github.com/Digital-Data-Co/forge/issues

- Docker Images: https://ghcr.io/digital-data-co/forge

- Contact: contact@digitaldata.co

License

Forge is open-source software. See the LICENSE file for details.

Ready to get started? Check out the Installation Guide or Getting Started Guide.

Administration Guide

Welcome to the Forge Administration Guide. This guide provides comprehensive information for installing, configuring, and maintaining your Forge instance.

What is Forge?

Forge is a modern, open-source web interface for running automation tasks with enterprise-grade compliance features. It is designed to be a lightweight, fast, and easy-to-use alternative to more complex automation platforms.

It allows you to securely manage and execute tasks for:

- Ansible playbooks

- Terraform/OpenTofu infrastructure-as-code

- Terragrunt and Terramate for Terraform orchestration

- Packer for golden image building

- Pulumi for modern IaC

- PowerShell and Shell scripts

- Python scripts

Core Features & Philosophy

Understanding Forge's design principles can help you get the most out of it:

-

Lightweight and Performant: Forge is written in Go and distributed as a single binary file. It has minimal resource requirements (CPU/RAM) and does not require external dependencies like Kubernetes, Docker, or a JVM. This makes it fast, efficient, and easy to deploy.

-

Simple to Install and Maintain: You can get Forge running in minutes. The Linux Service Installer provides automated setup with systemd service installation, TLS configuration, and Vault integration. Installation can be as simple as downloading the binary and running it. The simple architecture makes upgrades and maintenance straightforward.

-

Flexible Deployment: Run it as a binary, as a systemd service, or in a Docker container. It's suitable for everything from a personal homelab to enterprise environments.

-

Self-Hosted and Secure: Forge is a self-hosted solution. All your data, credentials, and logs remain on your own infrastructure, giving you full control. Credentials are always encrypted in the database. HashiCorp Vault integration is available for advanced secret management.

-

Enterprise Compliance: Built-in support for DISA STIG compliance, OpenSCAP scanning, policy packs, and multiple compliance frameworks (CIS, NIST, PCI-DSS).

-

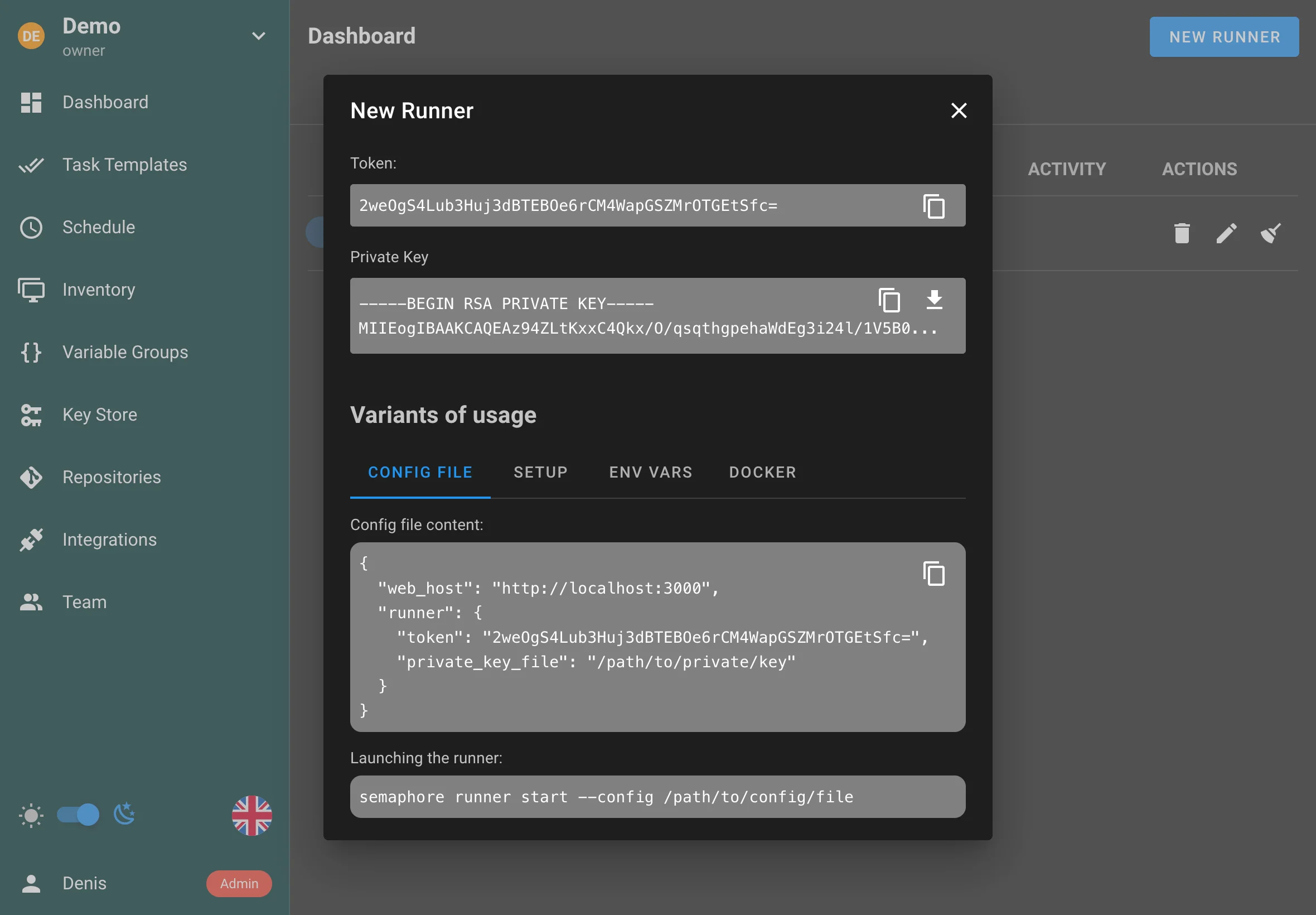

Powerful Integrations: While simple, Forge supports powerful features like LDAP/OpenID authentication, detailed role-based access control (RBAC) per project, remote runners for scaling out task execution, golden image management with Packer, infrastructure import with Terraformer, and a full REST API for programmatic access.

Quick Links

Installation

- Overview

- Linux Service Installer - Recommended for Linux servers

- Docker

- Binary File

- Kubernetes (Helm chart)

- Cloud Platforms

Configuration

Database

Security

Authentication

Secret Management

Operations

- System Binaries - Manage Packer, Terraform, Ansible, etc.

- Runners - Remote execution agents

- CLI Tools - Command-line administration

- Logging - Log management and analysis

- Notifications - Email, Slack, Teams, etc.

- API - REST API reference

Maintenance

Installation

You can install Forge in multiple ways, depending on your operating system, environment, and preferences:

Installation Methods

Linux Service Installer (Recommended for Linux)

The Linux Service Installer provides the most complete setup experience for Linux servers. It automatically:

- Installs Forge as a systemd service

- Configures encrypted configuration storage

- Sets up TLS with Let's Encrypt (or self-signed fallback)

- Installs and configures HashiCorp Vault

- Installs required dependencies (Ansible, OpenSCAP, QEMU, etc.)

Supported Distributions:

- Ubuntu 20.04, 22.04, 24.04

- Red Hat Enterprise Linux 8, 9

- Rocky Linux 8, 9

- AlmaLinux 8, 9

- SUSE Linux Enterprise Server 15+

Docker

Run Forge as a container using Docker or Docker Compose. Ideal for fast setup, sandboxed environments, and CI/CD pipelines. Recommended for users who prefer infrastructure as code.

Features:

- Pre-built images with all dependencies

- STIG-hardened container images

- Read-only filesystem support

- Easy backup and restore

Binary File

Download a precompiled binary from the releases page. Great for manual installation or embedding in custom workflows. Works across Linux, macOS, and Windows (via WSL).

Use Cases:

- Quick testing and evaluation

- Custom deployment scripts

- Embedded in other systems

Kubernetes (Helm Chart)

Deploy Forge into a Kubernetes cluster using Helm. Best suited for production-grade, scalable infrastructure. Supports easy configuration and upgrades via Helm values.

Features:

- High availability support

- Horizontal scaling

- Persistent storage

- Service mesh integration

Cloud Platforms

Guidance for deploying Forge to cloud platforms using VMs, containers, or Kubernetes with managed services.

Supported Platforms:

- AWS (EC2, ECS, EKS)

- Azure (VM, Container Instances, AKS)

- Google Cloud Platform (Compute Engine, GKE)

- Other cloud providers

System Requirements

Minimum Requirements

- CPU: 2 cores

- RAM: 2 GB

- Disk: 10 GB free space

- OS: Linux (x64, ARM64), macOS, Windows (via WSL)

Recommended for Production

- CPU: 4+ cores

- RAM: 4+ GB

- Disk: 50+ GB free space (for logs, task files, images)

- Database: PostgreSQL or MySQL for multi-user environments

- Network: HTTPS with valid certificate
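Before installing, the minimum requirements above can be checked with a short preflight script. This is a sketch under stated assumptions, not a tool shipped with Forge: the function names check and preflight are illustrative, the script is Linux-only (it reads nproc and /proc/meminfo), and /var/lib is assumed to be the filesystem that will hold Forge data.

```shell
#!/bin/sh
# Preflight sketch: compare this host against the documented minimums
# (2 CPU cores, 2 GB RAM, 10 GB free disk).

# check NAME ACTUAL MINIMUM UNIT
# Prints one result line and returns 1 when ACTUAL is below MINIMUM.
check() {
    if [ "$2" -ge "$3" ]; then
        echo "ok    $1: $2 $4 (minimum $3 $4)"
    else
        echo "FAIL  $1: $2 $4 (minimum $3 $4)"
        return 1
    fi
}

# preflight [DIR]
# DIR is the directory whose filesystem should have the free space.
preflight() {
    target_dir=${1:-/var/lib}
    cores=$(nproc)
    mem_mb=$(awk '/^MemTotal:/ {print int($2 / 1024)}' /proc/meminfo)
    disk_gb=$(df -Pk "$target_dir" | awk 'NR == 2 {print int($4 / 1024 / 1024)}')

    rc=0
    check "CPU cores" "$cores" 2 cores || rc=1
    # The kernel reserves some memory, so a nominal 2 GB host reports
    # slightly less; 1800 MB is a deliberately tolerant threshold.
    check "RAM" "$mem_mb" 1800 MB || rc=1
    check "Free disk" "$disk_gb" 10 GB || rc=1
    return $rc
}

preflight /var/lib || echo "Host is below the documented minimum requirements."
```

For a production host, the same function can be reused with the recommended values (4 cores, 4 GB RAM, 50 GB disk) by changing the three thresholds.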

Required Dependencies

Forge will automatically install these during Linux Service Installer setup:

- Ansible - For playbook execution

- OpenSCAP - For compliance scanning

- QEMU - For local Packer builds (optional)

- Git - For repository access

- Terraform/OpenTofu - Managed via System Binaries (optional)

- Packer - Managed via System Binaries (optional)

Post-Installation

After installation, you'll need to:

- Access the Web UI - Default: http://localhost:3000

- Complete Initial Setup - Create admin user and configure database

- Configure Authentication - Set up LDAP or OpenID if needed

- Add System Binaries - Install Terraform, Packer, etc. via Admin Settings

- Create Your First Project - Start using Forge!

Next Steps

- Configuration Guide - Configure Forge for your environment

- Security Guide - Secure your installation

- User Guide - Learn how to use Forge

Linux Service Installer

The Linux Service Installer is the recommended installation method for Linux servers. It provides automated setup with systemd service installation, TLS configuration, Vault integration, and dependency management.

Supported Distributions

- Ubuntu: 20.04, 22.04, 24.04

- Red Hat Enterprise Linux: 8, 9

- Rocky Linux: 8, 9

- AlmaLinux: 8, 9

- SUSE Linux Enterprise Server: 15+

Features

The Linux Service Installer automatically:

- ✅ Installs Forge as a systemd service

- ✅ Configures encrypted configuration storage (/etc/forge/config.enc)

- ✅ Sets up TLS with Let's Encrypt (or self-signed fallback)

- ✅ Installs and configures HashiCorp Vault

- ✅ Installs required dependencies (Ansible, OpenSCAP, QEMU, etc.)

- ✅ Configures automatic key management

- ✅ Sets up proper file permissions and security

Installation Steps

1. Download the Installer

Download the latest Forge binary for your platform:

# For x64 systems

wget https://github.com/Digital-Data-Co/forge/releases/download/v0.2.5/forge_Linux_x86_64.tar.gz

tar -xzf forge_Linux_x86_64.tar.gz

# For ARM64 systems

wget https://github.com/Digital-Data-Co/forge/releases/download/v0.2.5/forge_Linux_arm64.tar.gz

tar -xzf forge_Linux_arm64.tar.gz

2. Run the Installer

Execute the installer with appropriate permissions:

sudo ./forge install

The installer will:

- Detect your Linux distribution

- Install required system packages

- Create the forge system user

- Set up systemd service files

- Configure encrypted configuration storage

- Install and initialize HashiCorp Vault

- Set up TLS certificates (Let's Encrypt or self-signed)

- Install dependencies (Ansible, OpenSCAP, QEMU, etc.)

3. Complete Initial Setup

After installation, access the web UI:

# The service starts automatically

sudo systemctl status forge

# Access the web UI

# Default: http://localhost:3000

# Or use your server's IP/hostname

Navigate to the web UI and complete the initial setup wizard:

- Create an admin user

- Configure database connection

- Set up basic configuration

Configuration

Encrypted Configuration

The installer stores sensitive configuration in /etc/forge/config.enc with automatic key management. The encryption key is managed securely by the system.

TLS Configuration

The installer automatically configures TLS:

Let's Encrypt (Recommended):

- Automatically provisions certificates via certbot

- Auto-renewal configured

- Requires valid domain name and port 80/443 access

Self-Signed (Fallback):

- Generated automatically if Let's Encrypt fails

- Suitable for development/testing

- Browser warnings expected

HashiCorp Vault

Vault is automatically:

- Installed and initialized

- Unsealed and ready to use

- Integrated with Forge for secret storage

- Configured with proper permissions

System Dependencies

The installer automatically installs:

- Ansible - Latest version from distribution repositories

- OpenSCAP - For compliance scanning

- QEMU - For local Packer builds (if supported)

- Git - For repository access

- Other tools - As required by your distribution

Service Management

Start/Stop Service

sudo systemctl start forge

sudo systemctl stop forge

sudo systemctl restart forge

Enable/Disable Auto-Start

sudo systemctl enable forge

sudo systemctl disable forge

View Logs

# View service logs

sudo journalctl -u forge -f

# View recent logs

sudo journalctl -u forge -n 100

# View logs since boot

sudo journalctl -u forge -b

Service Status

sudo systemctl status forge

File Locations

After installation, files are organized as follows:

/etc/forge/

├── config.enc # Encrypted configuration

├── config.json # Non-sensitive configuration (if any)

└── vault/ # Vault data directory

/var/lib/forge/

├── database/ # Database files (SQLite default)

├── uploads/ # Uploaded files

├── logs/ # Application logs

└── tmp/ # Temporary files

/usr/local/bin/forge # Forge binary (if installed system-wide)

Upgrading

To upgrade Forge installed via the service installer:

# 1. Download new version

wget https://github.com/Digital-Data-Co/forge/releases/download/v0.2.6/forge_Linux_x86_64.tar.gz

tar -xzf forge_Linux_x86_64.tar.gz

# 2. Stop service

sudo systemctl stop forge

# 3. Backup configuration

sudo cp /etc/forge/config.enc /etc/forge/config.enc.backup

# 4. Replace binary

sudo cp forge /usr/local/bin/forge # Or wherever your binary is

# 5. Start service

sudo systemctl start forge

# 6. Verify

sudo systemctl status forge

Troubleshooting

Service Won't Start

# Check service status

sudo systemctl status forge

# Check logs for errors

sudo journalctl -u forge -n 50

# Verify configuration

sudo forge config validate

TLS Certificate Issues

# Check certbot status

sudo certbot certificates

# Renew certificate manually

sudo certbot renew

# Check nginx/apache configuration if using reverse proxy

Vault Issues

# Check Vault status

sudo systemctl status vault

# View Vault logs

sudo journalctl -u vault -f

# Re-initialize Vault (WARNING: loses data)

sudo forge vault init

Permission Issues

# Verify forge user exists

id forge

# Check file permissions

ls -la /etc/forge/

ls -la /var/lib/forge/

# Fix permissions if needed

sudo chown -R forge:forge /var/lib/forge

Uninstallation

To remove Forge installed via the service installer:

# 1. Stop and disable service

sudo systemctl stop forge

sudo systemctl disable forge

# 2. Remove service file

sudo rm /etc/systemd/system/forge.service

sudo systemctl daemon-reload

# 3. Remove files (optional - backup first!)

sudo rm -rf /etc/forge

sudo rm -rf /var/lib/forge

sudo rm /usr/local/bin/forge

# 4. Remove user (optional)

sudo userdel forge

Next Steps

- Configuration Guide - Configure Forge settings

- Security Guide - Secure your installation

- User Guide - Start using Forge

Docker

Create a docker-compose.yml file with the following content:

services:
  # uncomment this section and comment out the mysql section to use postgres instead of mysql
  #postgres:
    #restart: unless-stopped
    #image: postgres:14
    #hostname: postgres
    #volumes:
    #  - semaphore-postgres:/var/lib/postgresql/data
    #environment:
    #  POSTGRES_USER: semaphore
    #  POSTGRES_PASSWORD: semaphore
    #  POSTGRES_DB: semaphore
  # if you wish to use postgres, comment the mysql service section below
  mysql:
    restart: unless-stopped
    image: mysql:8.0
    hostname: mysql
    volumes:
      - semaphore-mysql:/var/lib/mysql
    environment:
      MYSQL_RANDOM_ROOT_PASSWORD: 'yes'
      MYSQL_DATABASE: semaphore
      MYSQL_USER: semaphore
      MYSQL_PASSWORD: semaphore
  semaphore:
    restart: unless-stopped
    ports:
      - 3000:3000
    image: semaphoreui/semaphore:latest
    environment:
      SEMAPHORE_DB_USER: semaphore
      SEMAPHORE_DB_PASS: semaphore
      SEMAPHORE_DB_HOST: mysql # for postgres, change to: postgres
      SEMAPHORE_DB_PORT: 3306 # change to 5432 for postgres
      SEMAPHORE_DB_DIALECT: mysql # for postgres, change to: postgres
      SEMAPHORE_DB: semaphore
      # To use SQLite instead of MySQL/Postgres (v2.16+)
      # SEMAPHORE_DB_DIALECT: sqlite
      # SEMAPHORE_DB: "/etc/semaphore/semaphore.sqlite"
      SEMAPHORE_PLAYBOOK_PATH: /tmp/semaphore/
      SEMAPHORE_ADMIN_PASSWORD: changeme
      SEMAPHORE_ADMIN_NAME: admin
      SEMAPHORE_ADMIN_EMAIL: admin@localhost
      SEMAPHORE_ADMIN: admin
      SEMAPHORE_ACCESS_KEY_ENCRYPTION: gs72mPntFATGJs9qK0pQ0rKtfidlexiMjYCH9gWKhTU=
      SEMAPHORE_LDAP_ACTIVATED: 'no' # if you wish to use ldap, set to: 'yes'
      SEMAPHORE_LDAP_HOST: dc01.local.example.com
      SEMAPHORE_LDAP_PORT: '636'
      SEMAPHORE_LDAP_NEEDTLS: 'yes'
      SEMAPHORE_LDAP_DN_BIND: 'uid=bind_user,cn=users,cn=accounts,dc=local,dc=shiftsystems,dc=net'
      SEMAPHORE_LDAP_PASSWORD: 'ldap_bind_account_password'
      SEMAPHORE_LDAP_DN_SEARCH: 'dc=local,dc=example,dc=com'
      SEMAPHORE_LDAP_SEARCH_FILTER: "(&(uid=%s)(memberOf=cn=ipausers,cn=groups,cn=accounts,dc=local,dc=example,dc=com))"
      TZ: UTC
    depends_on:
      - mysql # for postgres, change to: postgres
volumes:
  semaphore-mysql: # to use postgres, switch to: semaphore-postgres

You must specify the following confidential variables:

- MYSQL_PASSWORD and SEMAPHORE_DB_PASS - password for the MySQL user.

- SEMAPHORE_ADMIN_PASSWORD - password for Forge's admin user.

- SEMAPHORE_ACCESS_KEY_ENCRYPTION - key for encrypting access keys in the database. Generate it with the following command: head -c32 /dev/urandom | base64.
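The key-generation command can be wrapped in a small script that also sanity-checks the result. This is a minimal sketch: the function name generate_key is an assumption, and the length check relies only on the fact that 32 bytes always encode to 44 base64 characters.

```shell
#!/bin/sh
# Generate a random 32-byte key and base64-encode it (the command from the text).
generate_key() {
    head -c32 /dev/urandom | base64
}

key=$(generate_key)

# 32 bytes always encode to 44 base64 characters, so the length is a cheap sanity check.
if [ "${#key}" -ne 44 ]; then
    echo "unexpected key length: ${#key}" >&2
else
    # Print the value in KEY=value form, ready to paste into an env file.
    # Avoid echoing real keys in shared terminals or CI logs.
    printf 'SEMAPHORE_ACCESS_KEY_ENCRYPTION=%s\n' "$key"
fi
```

Generate the key once and keep it stable: access keys already stored in the database were encrypted with it.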

If you are using Docker Swarm, it is strongly recommended that you don't embed credentials directly in the Compose file (nor in environment variables generally) and instead use Docker Secrets. Forge supports a common Docker container pattern for retrieving settings from files instead of the environment by appending _FILE to the end of the environment variable name. See the Docker documentation for an example.
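The _FILE convention is usually implemented in a container's entrypoint script. The sketch below shows the general pattern used by many container images; it is not taken from Forge's entrypoint, and the function name file_env is illustrative. It assumes variable names come from a trusted source, since it uses eval for indirect lookup in plain POSIX sh.

```shell
#!/bin/sh
# file_env VAR
# If VAR_FILE is set, read the file it names and export its contents as VAR.
# Setting both VAR and VAR_FILE is treated as a configuration error.
file_env() {
    var=$1
    file_var="${var}_FILE"
    eval "value=\${$var:-}"
    eval "file_path=\${$file_var:-}"

    if [ -n "$value" ] && [ -n "$file_path" ]; then
        echo "error: both $var and $file_var are set" >&2
        return 1
    fi
    if [ -n "$file_path" ]; then
        # Command substitution strips the trailing newline most editors add.
        value=$(cat "$file_path") || return 1
        export "$var=$value"
        unset "$file_var"
    fi
}

# Demonstration with a temporary file standing in for /run/secrets/...
secret_file=$(mktemp)
printf 'changeme\n' > "$secret_file"
unset SEMAPHORE_ADMIN_PASSWORD
SEMAPHORE_ADMIN_PASSWORD_FILE=$secret_file
file_env SEMAPHORE_ADMIN_PASSWORD
echo "loaded ${#SEMAPHORE_ADMIN_PASSWORD} characters"
rm -f "$secret_file"
```

Rejecting the case where both forms are set avoids silently preferring one source of a credential over the other.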

A limited example using secrets:

secrets:
  semaphore_admin_pw:
    file: semaphore_admin_password.txt

services:
  semaphore:
    restart: unless-stopped
    ports:
      - 3000:3000
    image: semaphoreui/semaphore:latest
    environment:
      SEMAPHORE_ADMIN_PASSWORD_FILE: /run/secrets/semaphore_admin_pw
      SEMAPHORE_ADMIN_NAME: admin
      SEMAPHORE_ADMIN_EMAIL: admin@localhost
      SEMAPHORE_ADMIN: admin
    # grant the service access to the secret so it is mounted under /run/secrets
    secrets:
      - semaphore_admin_pw

Run the following command to start Forge with the configured database (MySQL or Postgres):

docker-compose up

Forge will be available at http://localhost:3000.

Installing Additional Python Dependencies

When the Forge container starts, it can automatically install additional Python packages that you may need for your Ansible playbooks. To use this feature:

- Create a requirements.txt file with your Python dependencies

- Mount this file to the container at the path specified by SEMAPHORE_CONFIG_PATH (defaults to /etc/semaphore)

Example update to your docker-compose.yml:

services:
  semaphore:
    restart: unless-stopped
    ports:
      - 3000:3000
    image: semaphoreui/semaphore:latest
    volumes:
      - ./requirements.txt:/etc/semaphore/requirements.txt

During container startup, Forge will detect the requirements.txt file and automatically run pip3 install --upgrade -r ${SEMAPHORE_CONFIG_PATH}/requirements.txt to install the specified packages.
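The startup behaviour described above can be sketched as follows. This is an illustration of the documented logic, not the container's actual entrypoint: the function name install_requirements and the PIP_CMD override are assumptions added so the sketch can be dry-run without invoking pip.

```shell
#!/bin/sh
# install_requirements
# If requirements.txt exists under SEMAPHORE_CONFIG_PATH, install it with pip3.
# PIP_CMD is a hypothetical override used here for dry runs.
install_requirements() {
    config_path=${SEMAPHORE_CONFIG_PATH:-/etc/semaphore}
    req_file="$config_path/requirements.txt"
    pip_cmd=${PIP_CMD:-pip3}

    if [ -f "$req_file" ]; then
        # $pip_cmd is intentionally unquoted so "echo pip3" splits into two words.
        $pip_cmd install --upgrade -r "$req_file"
    else
        echo "no requirements.txt in $config_path, nothing to install"
    fi
}

# Dry run: print the pip command instead of executing it.
PIP_CMD="echo pip3"
install_requirements
```

Because the install runs on every container start, pinning versions in requirements.txt keeps restarts reproducible.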

Binary file

Download the *.tar.gz for your platform from the Releases page. Unpack it and set up Forge using the following commands:

{{#tabs }} {{#tab name="Linux (x64)" }}

wget https://github.com/semaphoreui/semaphore/releases/\

download/v2.15.0/semaphore_2.15.0_linux_amd64.tar.gz

tar xf semaphore_2.15.0_linux_amd64.tar.gz

./semaphore setup

{{#endtab }}

{{#tab name="Linux (ARM64)" }}

wget https://github.com/semaphoreui/semaphore/releases/\

download/v2.15.0/semaphore_2.15.0_linux_arm64.tar.gz

tar xf semaphore_2.15.0_linux_arm64.tar.gz

./semaphore setup

{{#endtab }}

{{#tab name="Windows (x64)" }}

Invoke-WebRequest `

-Uri ("https://github.com/semaphoreui/semaphore/releases/" +

"download/v2.15.0/semaphore_2.15.0_windows_amd64.zip") `

-OutFile semaphore.zip

Expand-Archive -Path semaphore.zip -DestinationPath ./

./semaphore setup

{{#endtab }} {{#endtabs }}

Now you can run Forge:

./semaphore server --config=./config.json

Forge will be available at http://localhost:3000.

Run as a service

For more detail, see the extended Systemd service documentation.

If you installed Forge via a package manager, or by downloading a binary file, you should create the Forge service manually.

Create the systemd service file:

Replace /path/to/semaphore and /path/to/config.json with the paths to your semaphore binary and config file.

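# sudo does not apply to a shell redirect, so write the file through tee.
# The quoted 'EOF' keeps $MAINPID literal instead of letting the shell expand it.
sudo tee /etc/systemd/system/semaphore.service > /dev/null <<'EOF'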

[Unit]

Description=Forge

Documentation=https://github.com/semaphoreui/semaphore

Wants=network-online.target

After=network-online.target

[Service]

Type=simple

ExecReload=/bin/kill -HUP $MAINPID

ExecStart=/path/to/semaphore server --config=/path/to/config.json

SyslogIdentifier=semaphore

Restart=always

RestartSec=10s

[Install]

WantedBy=multi-user.target

EOF

Start the Forge service:

sudo systemctl daemon-reload

sudo systemctl start semaphore

Check the Forge service status:

sudo systemctl status semaphore

To start the Forge service automatically at boot:

sudo systemctl enable semaphore

Kubernetes (Helm chart)

Forge provides a Helm chart for installation on Kubernetes.

Thorough documentation is available on artifacthub.io: Forge Helm Chart.

Cloud deployment

You can run Forge in any cloud environment using the same supported installation methods:

- Virtual machines: install via package manager or binary, and run behind a reverse proxy such as NGINX. Use a managed database (e.g., Amazon RDS, Cloud SQL) for reliability.

- Containers: deploy with Docker or Docker Compose on a VM or container service. See persistent volumes and environment configuration in the Docker guide.

- Kubernetes: deploy with the official Helm chart. Use cloud storage classes and managed databases.

Essentials:

- Configure external URL and TLS at your load balancer or reverse proxy.

- Store sensitive values (DB credentials, OAuth secrets) in a secure secret manager or Kubernetes Secrets.

- Use managed databases for production and enable regular backups.

- Put runners close to your workloads to reduce latency and egress.

Related guides:

Package manager

Download the package file from the Releases page: *.deb for Debian and Ubuntu, *.rpm for CentOS and Red Hat.

Here are several installation commands, depending on the package manager:

{{#tabs }}

{{#tab name="Debian / Ubuntu (x64)"}}

wget https://github.com/semaphoreui/semaphore/releases/\

download/v2.15.0/semaphore_2.15.0_linux_amd64.deb

sudo dpkg -i semaphore_2.15.0_linux_amd64.deb

{{#endtab }}

{{#tab name="Debian / Ubuntu (ARM64)" }}

wget https://github.com/semaphoreui/semaphore/releases/\

download/v2.15.0/semaphore_2.15.0_linux_arm64.deb

sudo dpkg -i semaphore_2.15.0_linux_arm64.deb

{{#endtab }}

{{#tab name="CentOS (x64)" }}

wget https://github.com/semaphoreui/semaphore/releases/\

download/v2.15.0/semaphore_2.15.0_linux_amd64.rpm

sudo yum install semaphore_2.15.0_linux_amd64.rpm

{{#endtab }}

{{#tab name="CentOS (ARM64)" }}

wget https://github.com/semaphoreui/semaphore/releases/\

download/v2.15.0/semaphore_2.15.0_linux_arm64.rpm

sudo yum install semaphore_2.15.0_linux_arm64.rpm

{{#endtab }}

{{#endtabs }}

Set up Forge using the following command:

semaphore setup

Now you can run Forge:

semaphore server --config=./config.json

Forge will be available at http://localhost:3000.

Snap (deprecated)

To install Forge via snap, run the following command in a terminal:

sudo snap install semaphore

Forge will be available at http://localhost:3000.

To log in, you must first create an admin user. Use the following commands:

sudo snap stop semaphore

sudo semaphore user add --admin \

--login john \

--name=John \

--email=john1996@gmail.com \

--password=12345

sudo snap start semaphore

You can check the status of the Forge service using the following command:

sudo snap services semaphore

It should print the following table:

Service Startup Current Notes

semaphore.semaphored enabled active -

After installation, you can set up Forge via Snap Configuration. Use the following command to see your Forge configuration:

sudo snap get semaphore

You can find the list of available options in the Configuration options reference.

Manually installing Forge

This documentation goes into the details of how to set up Forge when using these installation methods:

The Forge software package is only one part of the system needed to run Ansible successfully.

The Python3 and Ansible execution environments are just as important!

NOTE: There are existing Ansible Galaxy roles that handle this setup logic for you, or that can serve as a base template for your own Ansible role!

Service User

Forge does not need to run as the root user, so it shouldn't.

Benefits of using a service user:

- Has its own user-config

- Has its own environment

- Processes easily identifiable

- Gained system security

You can create a system user either manually by using adduser or using the ansible.builtin.user module.

In this documentation we will assume:

- the service user created is named semaphore

- it has the shell /bin/bash set

- its home directory is /home/semaphore
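As a rough sketch, the assumptions above can be checked against the system user database. The helper name check_passwd_entry is hypothetical; the commented useradd line is only one possible way to create such a user and is not taken from this documentation.

```shell
# One possible way to create the user (run as root; shown for illustration only):
#   useradd --system --create-home --home-dir /home/semaphore --shell /bin/bash semaphore

# check_passwd_entry (hypothetical helper): takes one passwd(5) line and
# succeeds only if home directory and shell match the assumptions above.
check_passwd_entry() {
  entry_home="$(printf '%s' "$1" | cut -d: -f6)"
  entry_shell="$(printf '%s' "$1" | cut -d: -f7)"
  [ "$entry_home" = "/home/semaphore" ] && [ "$entry_shell" = "/bin/bash" ]
}

# getent reads the system user database; it fails if the user does not exist.
if entry="$(getent passwd semaphore 2>/dev/null)"; then
  if check_passwd_entry "$entry"; then
    echo "service user matches the assumed setup"
  else
    echo "service user exists but differs from the assumed setup"
  fi
else
  echo "user semaphore does not exist yet"
fi
```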

Troubleshooting

If the Ansible execution of Forge is failing - you will need to troubleshoot it in the context of the service user.

You have multiple options to do so:

- Change your whole shell session to be in the user's context:

sudo su --login semaphore

- Run a single command in the user's context:

sudo --login -u semaphore <command>

Python3

Ansible is built using the Python3 programming language.

A clean Python3 setup is therefore essential for Ansible to work correctly.

First, make sure the packages python3 and python3-pip are installed on your system!

You have multiple options to install required Python modules:

- Installing them in the service user's context

- Installing them in a service-specific Virtual Environment

Requirements

Either way - it is recommended to use a requirements.txt file to specify the modules that need to be installed.

We will assume the file /home/semaphore/requirements.txt is used.

Here is an example of its content:

ansible

# for common jinja-filters

netaddr

jmespath

# for common modules

pywinrm

passlib

requests

docker

NOTE: You should also update those requirements from time to time!

An option for doing this automatically is also shown in the service example below.

Modules in user context

Manually:

sudo --login -u semaphore python3 -m pip install --user --upgrade -r /home/semaphore/requirements.txt

Using Ansible:

- name: Install requirements

ansible.builtin.pip:

requirements: '/home/semaphore/requirements.txt'

extra_args: '--user --upgrade'

become_user: 'semaphore'

Modules in a virtualenv

We will assume the virtualenv is created at /home/semaphore/venv

Make sure the virtual environment is activated inside the Service! This is also shown in the service example below.

Manually:

sudo su --login semaphore

python3 -m pip install --user virtualenv

python3 -m venv /home/semaphore/venv

# activate the context of the virtual environment

source /home/semaphore/venv/bin/activate

# verify we are using python3 from inside the venv

which python3

> /home/semaphore/venv/bin/python3

python3 -m pip install --upgrade -r /home/semaphore/requirements.txt

# disable the context to the virtual environment

deactivate

Using Ansible:

- name: Create virtual environment and install requirements into it

ansible.builtin.pip:

requirements: '/home/semaphore/requirements.txt'

virtualenv: '/home/semaphore/venv'

state: present # or 'latest' to upgrade the requirements

Troubleshooting

If you encounter Python3 issues when using a virtual environment, you will need to change into its context to troubleshoot them:

sudo su --login semaphore

source /home/semaphore/venv/bin/activate

# verify we are using python3 from inside the venv

which python3

> /home/semaphore/venv/bin/python3

# troubleshooting

deactivate

Sometimes a virtual environment also breaks on system upgrades. If this happens you might just remove the existing one and re-create it.

Ansible Collections & Roles

You might want to pre-install Ansible collections and roles, so they don't need to be installed every time a task runs!

Requirements

It is recommended to use a requirements.yml file to specify the collections and roles that need to be installed.

We will assume the file /home/semaphore/requirements.yml is used.

Here is an example of its content:

---

collections:

- 'namespace.collection'

# for common collections:

- 'community.general'

- 'ansible.posix'

- 'community.mysql'

- 'community.crypto'

roles:

- src: 'namespace.role'

See also: Installing Collections, Installing Roles

NOTE: You should also update those requirements from time to time!

An option for doing this automatically is also shown in the service example below.

Install in user-context

Manually:

sudo su --login semaphore

ansible-galaxy collection install --upgrade -r /home/semaphore/requirements.yml

ansible-galaxy role install --force -r /home/semaphore/requirements.yml

Install when using a virtualenv

Manually:

sudo su --login semaphore

source /home/semaphore/venv/bin/activate

# verify we are using python3 from inside the venv

which python3

> /home/semaphore/venv/bin/python3

ansible-galaxy collection install --upgrade -r /home/semaphore/requirements.yml

ansible-galaxy role install --force -r /home/semaphore/requirements.yml

deactivate

Reverse Proxy

See: Security - Encrypted connection

Extended Systemd Service

Here is the basic template of the systemd service.

Add additional settings under their [PART]

Base

[Unit]

Description=Forge

Documentation=https://docs.semaphoreui.com/

Wants=network-online.target

After=network-online.target

ConditionPathExists=/usr/bin/semaphore

ConditionPathExists=/etc/semaphore/config.json

[Service]

ExecStart=/usr/bin/semaphore server --config /etc/semaphore/config.json

ExecReload=/bin/kill -HUP $MAINPID

Restart=always

RestartSec=10s

[Install]

WantedBy=multi-user.target

Service user

[Service]

User=semaphore

Group=semaphore

Python Modules

In user-context

[Service]

# to auto-upgrade python modules at service startup

ExecStartPre=/bin/bash -c 'python3 -m pip install --upgrade --user -r /home/semaphore/requirements.txt'

# so the executables are found

Environment="PATH=/usr/local/sbin:/usr/local/bin:/usr/sbin:/usr/bin:/sbin:/bin:/home/semaphore/.local/bin"

# set the correct python path. You can get the correct path with: python3 -c "import site; print(site.USER_SITE)"

Environment="PYTHONPATH=/home/semaphore/.local/lib/python3.10/site-packages"
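The PYTHONPATH above hard-codes python3.10, which will not match every system. A small sketch, assuming python3 is on the PATH, that asks the interpreter for the path and prints the matching unit-file line. The helper name pythonpath_line is hypothetical.

```shell
# pythonpath_line (hypothetical helper): format the unit-file line for a given path.
pythonpath_line() {
  printf 'Environment="PYTHONPATH=%s"\n' "$1"
}

# Ask the interpreter for its per-user site-packages directory.
# Run this as the service user so the path points into its home directory.
if command -v python3 >/dev/null 2>&1; then
  pythonpath_line "$(python3 -c 'import site; print(site.USER_SITE)')"
fi
```

Running it via sudo --login -u semaphore keeps the result in the service user's context; the printed line can then be pasted into the [Service] section.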

In virtualenv

[Service]

# to auto-upgrade python modules at service startup

ExecStartPre=/bin/bash -c 'source /home/semaphore/venv/bin/activate \

&& python3 -m pip install --upgrade -r /home/semaphore/requirements.txt'

# REPLACE THE EXISTING 'ExecStart'

ExecStart=/bin/bash -c 'source /home/semaphore/venv/bin/activate \

&& /usr/bin/semaphore server --config /etc/semaphore/config.json'

Ansible Collections & Roles

If using Python3 in user-context

[Service]

# to auto-upgrade ansible collections and roles at service startup

ExecStartPre=/bin/bash -c 'ansible-galaxy collection install --upgrade -r /home/semaphore/requirements.yml'

ExecStartPre=/bin/bash -c 'ansible-galaxy role install --force -r /home/semaphore/requirements.yml'

If using Python3 in virtualenv

[Service]

# to auto-upgrade ansible collections and roles at service startup

ExecStartPre=/bin/bash -c 'source /home/semaphore/venv/bin/activate \

&& ansible-galaxy collection install --upgrade -r /home/semaphore/requirements.yml \

&& ansible-galaxy role install --force -r /home/semaphore/requirements.yml'

Other use-cases

Using local MariaDB

[Unit]

Requires=mariadb.service

Using local Nginx

[Unit]

Wants=nginx.service

Sending logs to syslog

[Service]

StandardOutput=journal

StandardError=journal

SyslogIdentifier=semaphore

Full Examples

Python Modules in user-context

[Unit]

Description=Forge

Documentation=https://docs.semaphoreui.com/

Wants=network-online.target

After=network-online.target

ConditionPathExists=/usr/bin/semaphore

ConditionPathExists=/etc/semaphore/config.json

[Service]

User=semaphore

Group=semaphore

Restart=always

RestartSec=10s

Environment="PATH=/usr/local/sbin:/usr/local/bin:/usr/sbin:/usr/bin:/sbin:/bin:/home/semaphore/.local/bin"

ExecStartPre=/bin/bash -c 'ansible-galaxy collection install --upgrade -r /home/semaphore/requirements.yml'

ExecStartPre=/bin/bash -c 'ansible-galaxy role install --force -r /home/semaphore/requirements.yml'

ExecStartPre=/bin/bash -c 'python3 -m pip install --upgrade --user -r /home/semaphore/requirements.txt'

ExecStart=/usr/bin/semaphore server --config /etc/semaphore/config.json

ExecReload=/bin/kill -HUP $MAINPID

[Install]

WantedBy=multi-user.target

Python Modules in virtualenv

[Unit]

Description=Forge

Documentation=https://docs.semaphoreui.com/

Wants=network-online.target

After=network-online.target

ConditionPathExists=/usr/bin/semaphore

ConditionPathExists=/etc/semaphore/config.json

[Service]

User=semaphore

Group=semaphore

Restart=always

RestartSec=10s

ExecStartPre=/bin/bash -c 'source /home/semaphore/venv/bin/activate \

&& python3 -m pip install --upgrade -r /home/semaphore/requirements.txt'

ExecStartPre=/bin/bash -c 'source /home/semaphore/venv/bin/activate \

&& ansible-galaxy collection install --upgrade -r /home/semaphore/requirements.yml \

&& ansible-galaxy role install --force -r /home/semaphore/requirements.yml'

ExecStart=/bin/bash -c 'source /home/semaphore/venv/bin/activate \

&& /usr/bin/semaphore server --config /etc/semaphore/config.json'

ExecReload=/bin/kill -HUP $MAINPID

[Install]

WantedBy=multi-user.target

Fixes

If you have a custom system language set, you might run into problems that can be resolved by updating the associated environment variables:

[Service]

Environment=LANG="en_US.UTF-8"

Environment=LC_ALL="en_US.UTF-8"

Troubleshooting

If there is a problem while executing a task it might be an environmental issue with your setup - not an issue with Forge itself!

Please go through these steps to verify if the issue occurs outside Forge:

- Change into the context of the user:

sudo su --login semaphore

- Change into the context of the virtualenv if you use one:

source /home/semaphore/venv/bin/activate

# verify we are using python3 from inside the venv

which python3

> /home/semaphore/venv/bin/python3

# troubleshooting

deactivate

- Run the Ansible Playbook manually

- If it fails => there is an issue with your environment

- If it works:

- Re-check your configuration inside Forge

- It might be an issue with Forge

Configuration

Forge can be configured using several methods:

- Interactive setup - guided configuration when running Forge for the first time. It creates config.json.

- Configuration file - the primary and most flexible way to configure Forge.

- Environment variables - useful for containerized or cloud-native deployments.

- Snap configuration (deprecated) - legacy method used when installing via Snap packages.

Configuration options

Full list of available configuration options:

| Config file option / Environment variable | Description |
|---|---|
| Common | |
| git_client / FORGE_GIT_CLIENT | Type of Git client. Can be cmd_git or go_git. |
| ssh_config_path / FORGE_SSH_PATH | Path to SSH configuration file. |
| port / FORGE_PORT | TCP port on which the web interface will be available. Default: 3000 |
| interface / FORGE_INTERFACE | Useful if your server has multiple network interfaces. |
| tmp_path / FORGE_TMP_PATH | Path to directory where cloned repositories and generated files are stored. Default: /tmp/semaphore |
| max_parallel_tasks / FORGE_MAX_PARALLEL_TASKS | Max number of parallel tasks that can be run on the server. |
| max_task_duration_sec / FORGE_MAX_TASK_DURATION_SEC | Max duration of a task in seconds. |
| max_tasks_per_template / FORGE_MAX_TASKS_PER_TEMPLATE | Maximum number of recent tasks stored in the database for each template. |
| schedule.timezone / FORGE_SCHEDULE_TIMEZONE | Timezone used for scheduling tasks and cron jobs. |
| oidc_providers | OpenID provider settings. You can provide multiple OpenID providers. Read more about OpenID configuration in OpenID. |
| password_login_disable / FORGE_PASSWORD_LOGIN_DISABLED | Deny password login. |
| non_admin_can_create_project / FORGE_NON_ADMIN_CAN_CREATE_PROJECT | Allow non-admin users to create projects. |
| env_vars / FORGE_ENV_VARS | JSON map which contains environment variables. |
| forwarded_env_vars / FORGE_FORWARDED_ENV_VARS | JSON array of environment variables which will be forwarded from system. |
| apps / FORGE_APPS | JSON map which contains apps configuration. |
| use_remote_runner / FORGE_USE_REMOTE_RUNNER | |
| runner_registration_token / FORGE_RUNNER_REGISTRATION_TOKEN | |
| Database | |
| sqlite.host / FORGE_DB_HOST | Path to the SQLite database file. |
| bolt.host / FORGE_DB_HOST | Path to the BoltDB database file. |
| mysql.host / FORGE_DB_HOST | MySQL database host. |
| mysql.name / FORGE_DB_NAME | MySQL database (schema) name. |
| mysql.user / FORGE_DB_USER | MySQL user name. |
| mysql.pass / FORGE_DB_PASS | MySQL user's password. |
| postgres.host / FORGE_DB_HOST | Postgres database host. |
| postgres.name / FORGE_DB_NAME | Postgres database (schema) name. |
| postgres.user / FORGE_DB_USER | Postgres user name. |
| postgres.pass / FORGE_DB_PASS | Postgres user's password. |
| dialect / FORGE_DB_DIALECT | Can be sqlite (default), postgres, mysql or bolt (deprecated). |
| *.options / FORGE_DB_OPTIONS | JSON map which contains database connection options. |
| Security | |
| access_key_encryption / FORGE_ACCESS_KEY_ENCRYPTION | Secret key used for encrypting access keys in database. Read more in Database encryption reference. |
| cookie_hash / FORGE_COOKIE_HASH | Secret key used to sign cookies. |
| cookie_encryption / FORGE_COOKIE_ENCRYPTION | Secret key used to encrypt cookies. |
| web_host / FORGE_WEB_ROOT | Can be useful if you want to serve Forge under a subpath, for example: http://yourdomain.com/semaphore. Do not add a trailing /. |
| tls.enabled / FORGE_TLS_ENABLED | Enable or disable TLS (HTTPS) for secure communication with the Forge server. |
| tls.cert_file / FORGE_TLS_CERT_FILE | Path to TLS certificate file. |
| tls.key_file / FORGE_TLS_KEY_FILE | Path to TLS key file. |
| tls.http_redirect_port / FORGE_TLS_HTTP_REDIRECT_PORT | Port to redirect HTTP traffic to HTTPS. |
| auth.totp.enabled / FORGE_TOTP_ENABLED | Enable two-factor authentication using TOTP. |
| auth.totp.allow_recovery / FORGE_TOTP_ALLOW_RECOVERY | Allow users to reset TOTP using a recovery code. |
| Process | |
| process.user / FORGE_PROCESS_USER | User under which wrapped processes (such as Ansible, Terraform, or OpenTofu) will run. |
| process.uid / FORGE_PROCESS_UID | ID of user under which wrapped processes (such as Ansible, Terraform, or OpenTofu) will run. |
| process.gid / FORGE_PROCESS_GID | ID for group under which wrapped processes (such as Ansible, Terraform, or OpenTofu) will run. |
| process.chroot / FORGE_PROCESS_CHROOT | Chroot directory for wrapped processes. |
| Email | |
| email_sender / FORGE_EMAIL_SENDER | Email address of the sender. |
| email_host / FORGE_EMAIL_HOST | SMTP server hostname. |
| email_port / FORGE_EMAIL_PORT | SMTP server port. |
| email_secure / FORGE_EMAIL_SECURE | Enable StartTLS to upgrade an unencrypted SMTP connection to a secure, encrypted one. |
| email_tls / FORGE_EMAIL_TLS | Use SSL or TLS connection for communication with the SMTP server. |
| email_tls_min_version / FORGE_EMAIL_TLS_MIN_VERSION | Minimum TLS version to use for the connection. |
| email_username / FORGE_EMAIL_USERNAME | Username for SMTP server authentication. |
| email_password / FORGE_EMAIL_PASSWORD | Password for SMTP server authentication. |
| email_alert / FORGE_EMAIL_ALERT | Flag which enables email alerts. |
| Messengers | |
| telegram_alert / FORGE_TELEGRAM_ALERT | Set to True to enable pushing alerts to Telegram. It should be used in combination with telegram_chat and telegram_token. |
| telegram_chat / FORGE_TELEGRAM_CHAT | Set to the Chat ID for the chat to send alerts to. Read more in Telegram Notifications Setup. |
| telegram_token / FORGE_TELEGRAM_TOKEN | Set to the Authorization Token for the bot that will receive the alert payload. Read more in Telegram Notifications Setup. |
| slack_alert / FORGE_SLACK_ALERT | Set to True to enable pushing alerts to Slack. It should be used in combination with slack_url. |
| slack_url / FORGE_SLACK_URL | The Slack webhook URL. Forge will use it to POST Slack-formatted JSON alerts to the provided URL. |
| microsoft_teams_alert / FORGE_MICROSOFT_TEAMS_ALERT | Flag which enables Microsoft Teams alerts. |
| microsoft_teams_url / FORGE_MICROSOFT_TEAMS_URL | Microsoft Teams webhook URL. |
| rocketchat_alert / FORGE_ROCKETCHAT_ALERT | Set to True to enable pushing alerts to Rocket.Chat. It should be used in combination with rocketchat_url. Available since v2.9.56. |
| rocketchat_url / FORGE_ROCKETCHAT_URL | The Rocket.Chat webhook URL. Forge will use it to POST Rocket.Chat-formatted JSON alerts to the provided URL. Available since v2.9.56. |
| dingtalk_alert / FORGE_DINGTALK_ALERT | Enable Dingtalk alerts. |
| dingtalk_url / FORGE_DINGTALK_URL | Dingtalk messenger webhook URL. |
| gotify_alert / FORGE_GOTIFY_ALERT | Enable Gotify alerts. |
| gotify_url / FORGE_GOTIFY_URL | Gotify server URL. |
| gotify_token / FORGE_GOTIFY_TOKEN | Gotify server token. |
| LDAP | |
| ldap_enable / FORGE_LDAP_ENABLE | Flag which enables LDAP authentication. |
| ldap_needtls / FORGE_LDAP_NEEDTLS | Flag to enable or disable TLS for LDAP connections. |
| ldap_binddn / FORGE_LDAP_BIND_DN | The distinguished name (DN) used to bind to the LDAP server for authentication. |
| ldap_bindpassword / FORGE_LDAP_BIND_PASSWORD | The password used to bind to the LDAP server for authentication. |
| ldap_server / FORGE_LDAP_SERVER | The hostname and port of the LDAP server (e.g., ldap-server.com:1389). |
| ldap_searchdn / FORGE_LDAP_SEARCH_DN | The base distinguished name (DN) used for searching users in the LDAP directory (e.g., dc=example,dc=org). |
| ldap_searchfilter / FORGE_LDAP_SEARCH_FILTER | The filter used to search for users in the LDAP directory (e.g., (&(objectClass=inetOrgPerson)(uid=%s))). |
| ldap_mappings.dn / FORGE_LDAP_MAPPING_DN | LDAP attribute to use as the distinguished name (DN) mapping for user authentication. |
| ldap_mappings.mail / FORGE_LDAP_MAPPING_MAIL | LDAP attribute to use as the email address mapping for user authentication. |
| ldap_mappings.uid / FORGE_LDAP_MAPPING_UID | LDAP attribute to use as the user ID (UID) mapping for user authentication. |
| ldap_mappings.cn / FORGE_LDAP_MAPPING_CN | LDAP attribute to use as the common name (CN) mapping for user authentication. |
| Logging | |
| log.events.format / FORGE_EVENT_LOG_FORMAT | Event log format. Can be json or empty for text. |
| log.events.enabled / FORGE_EVENT_LOG_ENABLED | Enable or disable event logging. |
| log.events.logger / FORGE_EVENT_LOGGER | JSON map which contains event logger configuration. |
| log.tasks.format / FORGE_TASK_LOG_FORMAT | Task log format. Can be json or empty for text. |
| log.tasks.enabled / FORGE_TASK_LOG_ENABLED | Enable or disable task logging. |
| log.tasks.logger / FORGE_TASK_LOGGER | JSON map which contains task logger configuration. |
| log.tasks.result_logger / FORGE_TASK_RESULT_LOGGER | JSON map which contains task result logger configuration. |

Frequently asked questions

1. How to configure a public URL for Forge

If you run nginx or another web server in front of Forge, you should set the configuration option web_host.

For example, suppose NGINX on your server proxies requests to Forge.

The server address is https://example.com, and all requests to https://example.com/semaphore are proxied to Forge.

Your web_host will then be https://example.com/semaphore.
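The configuration options table asks that web_host carry no trailing /. A minimal sketch that normalizes a URL before it is written into the configuration. The helper name normalize_web_host is hypothetical and not part of Forge.

```shell
# normalize_web_host (hypothetical helper): strip any trailing slashes from a URL.
normalize_web_host() {
  url="$1"
  # Remove one trailing "/" per iteration until none is left.
  while [ "${url%/}" != "$url" ]; do
    url="${url%/}"
  done
  printf '%s\n' "$url"
}

normalize_web_host "https://example.com/semaphore/"
```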

Configuration file

Creating configuration file

Forge uses a config.json file for its core configuration. You can generate this file interactively using built-in tools or through a web-based configurator.

Generate via CLI

Use the following commands to generate the configuration file interactively:

- For the Forge server:

semaphore setup

- For the Forge runner:

semaphore runner setup

For more details about runner configuration, see the Runners section.

Generate via Web

Alternatively, you can use the web-based interactive configurator:

Configuration file example

Forge uses a config.json configuration file with the following content:

{

"mysql": {

"host": "127.0.0.1:3306",

"user": "root",

"pass": "***",

"name": "semaphore"

},

"dialect": "mysql",

"git_client": "go_git",

"auth": {

"totp": {

"enabled": false,

"allow_recovery": true

}

},

"use_remote_runner": true,

"runner_registration_token": "73fs***",

"tmp_path": "/tmp/semaphore",

"cookie_hash": "96Nt***",

"cookie_encryption": "x0bs***",

"access_key_encryption": "j1ia***",

"max_tasks_per_template": 3,

"schedule": {

"timezone": "UTC"

},

"log": {

"events": {

"enabled": true,

"path": "./events.log"

}

},

"process": {

"chroot": "/opt/semaphore/sandbox"

}

}
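The access_key_encryption value in the example is a generated secret. The Database security section gives the command head -c32 /dev/urandom | base64 for it; the sketch below wraps that command and checks the length of the result. Whether the same command suits the other secrets in the example is not stated here, so it is applied to access_key_encryption only.

```shell
# Generate 32 random bytes and base64-encode them.
key="$(head -c32 /dev/urandom | base64)"

# 32 bytes always encode to 44 base64 characters (including one "=" of padding).
if [ "${#key}" -eq 44 ]; then
  echo "generated a key of the expected length"
else
  echo "unexpected key length: ${#key}" >&2
fi
```

The value of key can then be placed in config.json as access_key_encryption.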

Configuration file usage

- For Forge server:

semaphore server --config ./config.json

- For Forge runner:

semaphore runner start --config ./config.json

Environment variables

Using environment variables, you can override any available configuration option.

You can use the interactive environment variables generator (for Docker):

Application environment for apps (Ansible, Terraform, etc.)

Forge can pass environment variables to application processes (Ansible, Terraform/OpenTofu, Python, PowerShell, etc.). There are two related options:

- env_vars / SEMAPHORE_ENV_VARS: static key-value pairs that will be set for app processes.

- forwarded_env_vars / SEMAPHORE_FORWARDED_ENV_VARS: a list of variable names the server will forward from its own process environment.

Example configuration file:

{

"env_vars": {

"HTTP_PROXY": "http://proxy.internal:3128",

"ANSIBLE_STDOUT_CALLBACK": "yaml"

},

"forwarded_env_vars": [

"AWS_ACCESS_KEY_ID",

"AWS_SECRET_ACCESS_KEY",

"GOOGLE_APPLICATION_CREDENTIALS"

]

}

Equivalent with environment variables:

export SEMAPHORE_ENV_VARS='{"HTTP_PROXY":"http://proxy.internal:3128","ANSIBLE_STDOUT_CALLBACK":"yaml"}'

export SEMAPHORE_FORWARDED_ENV_VARS='["AWS_ACCESS_KEY_ID","AWS_SECRET_ACCESS_KEY","GOOGLE_APPLICATION_CREDENTIALS"]'

Notes:

- Forwarding is explicit: only variables listed in forwarded_env_vars are inherited by app processes.

- Secrets should be provided securely (for example via Docker/Kubernetes secrets) and then forwarded using forwarded_env_vars.
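Because the forwarded list has to be a JSON array, hand-writing it in a shell profile is easy to get wrong. A small sketch that builds the array from plain variable names. The helper name json_string_array is hypothetical, and it assumes the names contain no quotes or backslashes, so no escaping is done.

```shell
# json_string_array (hypothetical helper): print the arguments as a JSON array of strings.
# Assumes plain variable names, so no JSON escaping is performed.
json_string_array() {
  out=""
  for name in "$@"; do
    # Add a comma before every element except the first.
    if [ -n "$out" ]; then out="$out,"; fi
    out="$out\"$name\""
  done
  printf '[%s]\n' "$out"
}

SEMAPHORE_FORWARDED_ENV_VARS="$(json_string_array AWS_ACCESS_KEY_ID AWS_SECRET_ACCESS_KEY GOOGLE_APPLICATION_CREDENTIALS)"
export SEMAPHORE_FORWARDED_ENV_VARS
echo "$SEMAPHORE_FORWARDED_ENV_VARS"
```

The result is the same string as the hand-written export shown earlier.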

Secret environment variables in Variable Groups

In addition to global environment variables, you can define per-project secrets in Variable Groups. Secret keys are masked in the UI and logs. See User Guide → Variable Groups for usage and Terraform integration with TF_VAR_* variables.

Interactive setup

Use this option for first-time configuration (it does not work for Forge installed via Snap).

semaphore setup

🔐 Security

Introduction

Security is a top priority in Forge. Whether you're automating critical infrastructure tasks or managing team access to sensitive systems, Forge is designed to provide robust, secure operations out of the box. This section outlines how Forge handles security and what you should consider when deploying it in production.

Authentication & authorization

Forge supports secure authentication and flexible authorization mechanisms:

- Login methods:

  - Username/password: Default method using credentials stored in the Forge database. Passwords are hashed using a strong algorithm (bcrypt).

  - LDAP: Allows integration with enterprise directory services. Supports user/group filtering and secure connections via LDAPS.

  - OpenID Connect (OIDC): Enables single sign-on with identity providers like Google, Azure AD, or Keycloak. Supports custom claims and group mappings.

- Two-Factor authentication (2FA): TOTP-based 2FA is available and recommended for all users. It can be enabled per user and supports optional recovery codes. See configuration options auth.totp.enabled and auth.totp.allow_recovery.

- Role-based access control: You can assign different roles to users such as Admin, Maintainer, or Viewer, limiting access based on responsibility.

- Session management: Sessions are protected with secure HTTP cookies. Session expiration and logout mechanisms ensure minimal exposure.

Secrets & credentials

Managing secrets securely is a core feature:

- Encrypted key store: Credentials and secret variables are encrypted at rest using AES encryption.

- Environment isolation: Secrets are only passed to jobs at runtime and are not exposed to the container environment directly.

- SSH keys and tokens: Users are responsible for uploading valid SSH keys and tokens. These are encrypted and only used when running tasks.

- HashiCorp Vault integration (Pro): Secrets can be stored in an external Vault instance. Choose storage per-secret when creating or editing a secret.

Running untrusted code / playbooks

Forge runs user-defined playbooks and commands, which can be risky:

- Container isolation: Tasks are executed in isolated Docker containers. These containers have no access to the host system.

- Least privilege: Containers run with minimal permissions and can be restricted further using Docker flags.

- Chroot execution: Forge can execute tasks inside a chroot jail to further isolate the execution environment from the host system.

- Task process user: Tasks can be executed under a dedicated non-root system user (e.g., forge) to reduce the impact of potential exploits. This is optional and can be configured based on system policies.

Secure Deployment

To ensure Forge is securely deployed:

- Use HTTPS: Forge supports HTTPS both via its built-in TLS support and through a reverse proxy like Nginx. It is strongly recommended to enable HTTPS in production. To enable built-in HTTPS support, add the following block to config.json:

{

...

"tls": {

"enabled": true,

"cert_file": "/path/to/cert/example.com.cert",

"key_file": "/path/to/key/example.com.key"

}

...

}

- Run behind a firewall: Limit access to Forge and the database to trusted IPs only.

- Database security: Use strong passwords and restrict database access to Forge only.

Updates & patch management

Security updates are published regularly:

- Stay updated: Always use the latest stable release.

- Changelog: Review changes on GitHub before updating.

- Automatic updates: If using Docker, consider automation pipelines for regular updates.

Reporting Vulnerabilities

Found a vulnerability? Help us keep Forge secure:

- Responsible disclosure: Please email us at security@forge.com.

- No public exploits: Do not share vulnerabilities publicly until patched.

- Acknowledgments: Security researchers may be acknowledged in release notes if desired.

Vulnerability resolution targets

We aim to resolve reported vulnerabilities within the following target windows:

- Critical: within 30 days

- High: within 60 days

- Medium: within 90 days

- Low: best effort, typically within 180 days

Out-of-cycle patches may be released for actively exploited issues affecting the latest stable releases.

Code security tooling

We use CodeQL, Codacy, Snyk and Renovate to analyze the codebase and dependencies, and to automate dependency updates.

Database security

Data encryption

Sensitive data is stored in the database in encrypted form. To enable encryption of access keys, set the access_key_encryption option in the configuration file. Generate its value with the following command:

head -c32 /dev/urandom | base64

Network security

For security reasons, Forge should not be used over unencrypted HTTP!

Why use encrypted connections? See: Article from Cloudflare.

Options you have:

VPN

You can use a Client-to-Site VPN, that terminates on the Forge server, to encrypt & secure the connection.

SSL

Forge supports SSL/TLS starting from v2.12.

config.json:

{

...

"tls": {

"enabled": true,

"cert_file": "/path/to/cert/example.com.cert",

"key_file": "/path/to/key/example.com.key"

}

...

}

Or environment variables (useful for Docker):

export SEMAPHORE_TLS_ENABLED=True

export SEMAPHORE_TLS_CERT_FILE=/path/to/cert/example.com.cert

export SEMAPHORE_TLS_KEY_FILE=/path/to/key/example.com.key

Alternatively, you can use a reverse proxy in front of Forge to handle secure connections. For example:

Self-signed SSL certificate

You can generate your own SSL certificate using the openssl CLI tool:

openssl req -x509 -newkey rsa:4096 \

-keyout key.pem -out cert.pem \

-sha256 -days 3650 -nodes \

-subj "/C=US/ST=California/L=San Francisco/O=CompanyName/OU=DevOps/CN=example.com"

Let's Encrypt SSL certificate

You can use Certbot to generate and automatically renew a Let's Encrypt SSL certificate.

Example for Apache:

sudo snap install certbot

sudo certbot --apache -n --agree-tos -d example.com -m mail@example.com

Others

If you want to use any other reverse proxy - make sure to also forward websocket connections on the /api/ws route!

Nginx config

Configuration example:

server {

listen 443 ssl;

server_name example.com;

# add Strict-Transport-Security to prevent man in the middle attacks

add_header Strict-Transport-Security "max-age=31536000" always;

# SSL

ssl_certificate /etc/nginx/cert/cert.pem;

ssl_certificate_key /etc/nginx/cert/privkey.pem;

# Recommendations from

# https://raymii.org/s/tutorials/Strong_SSL_Security_On_nginx.html

ssl_protocols TLSv1.2 TLSv1.3;

ssl_ciphers 'EECDH+AESGCM:EDH+AESGCM:AES256+EECDH:AES256+EDH';

ssl_prefer_server_ciphers on;

ssl_session_cache shared:SSL:10m;

# required to avoid HTTP 411: see Issue #1486

# (https://github.com/docker/docker/issues/1486)

chunked_transfer_encoding on;

location / {

proxy_pass http://127.0.0.1:3000/;

proxy_set_header Host $http_host;

proxy_set_header X-Real-IP $remote_addr;

proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;

proxy_set_header X-Forwarded-Proto $scheme;

proxy_buffering off;

proxy_request_buffering off;

}

location /api/ws {

proxy_pass http://127.0.0.1:3000/api/ws;

proxy_http_version 1.1;

proxy_set_header Upgrade $http_upgrade;

proxy_set_header Connection "upgrade";

proxy_set_header Origin "";

}

}

Apache config

Make sure you have enabled the following Apache modules:

sudo a2enmod proxy

sudo a2enmod proxy_http

sudo a2enmod proxy_wstunnel

Add the following virtual host to your Apache configuration:

<VirtualHost *:443>

ServerName example.com

ServerAdmin webmaster@localhost

SSLEngine on

SSLCertificateFile /path/to/example.com.crt

SSLCertificateKeyFile /path/to/example.com.key

ProxyPreserveHost On

<Location />

ProxyPass http://127.0.0.1:3000/

ProxyPassReverse http://127.0.0.1:3000/

</Location>

<Location /api/ws>

RewriteCond %{HTTP:Connection} Upgrade [NC]

RewriteCond %{HTTP:Upgrade} websocket [NC]

ProxyPass ws://127.0.0.1:3000/api/ws/

ProxyPassReverse ws://127.0.0.1:3000/api/ws/

</Location>

</VirtualHost>

LDAP configuration

The configuration file contains the following LDAP parameters:

{

"ldap_binddn": "cn=admin,dc=example,dc=org",

"ldap_bindpassword": "admin_password",

"ldap_server": "localhost:389",

"ldap_searchdn": "ou=users,dc=example,dc=org",

"ldap_searchfilter": "(&(objectClass=inetOrgPerson)(uid=%s))",

"ldap_mappings": {

"dn": "",

"mail": "uid",

"uid": "uid",

"cn": "cn"

},

"ldap_enable": true,

"ldap_needtls": false

}

All LDAP options:

| Parameter | Environment variable | Description |
|---|---|---|
| ldap_binddn | SEMAPHORE_LDAP_BIND_DN | DN of the LDAP user object used to bind. |
| ldap_bindpassword | SEMAPHORE_LDAP_BIND_PASSWORD | Password of the LDAP user defined in the bind DN. |
| ldap_server | SEMAPHORE_LDAP_SERVER | LDAP server host including port. For example: localhost:389. |
| ldap_searchdn | SEMAPHORE_LDAP_SEARCH_DN | Scope in which users are searched. For example: ou=users,dc=example,dc=org. |
| ldap_searchfilter | SEMAPHORE_LDAP_SEARCH_FILTER | User search expression. Default: (&(objectClass=inetOrgPerson)(uid=%s)), where %s is replaced with the login the user entered. |
| ldap_mappings.dn | SEMAPHORE_LDAP_MAPPING_DN | |
| ldap_mappings.mail | SEMAPHORE_LDAP_MAPPING_MAIL | User email claim expression*. |
| ldap_mappings.uid | SEMAPHORE_LDAP_MAPPING_UID | User login claim expression*. |
| ldap_mappings.cn | SEMAPHORE_LDAP_MAPPING_CN | User name claim expression*. |
| ldap_enable | SEMAPHORE_LDAP_ENABLE | Enables LDAP authentication. |
| ldap_needtls | SEMAPHORE_LDAP_NEEDTLS | Connect to the LDAP server over TLS. |
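The %s substitution in ldap_searchfilter can be pictured with a small Python sketch. The helper name build_search_filter is hypothetical, and the escaping step follows RFC 4515. This document does not say whether Forge escapes the login itself, so treat that part as an assumption rather than a description of Forge's behavior.

```python
# Hypothetical helper: shows how the entered login fills the %s slot
# of ldap_searchfilter. This is not Forge's actual implementation.

# RFC 4515 escapes for characters that have meaning inside a filter.
_LDAP_ESCAPES = {
    "\\": r"\5c",
    "*": r"\2a",
    "(": r"\28",
    ")": r"\29",
    "\x00": r"\00",
}


def build_search_filter(template: str, login: str) -> str:
    """Return the filter with %s replaced by the escaped login."""
    escaped = "".join(_LDAP_ESCAPES.get(ch, ch) for ch in login)
    return template.replace("%s", escaped)


default_filter = "(&(objectClass=inetOrgPerson)(uid=%s))"
print(build_search_filter(default_filter, "user1"))
```

Running the resulting filter through ldapsearch against your directory is a quick way to confirm it matches exactly one entry per login.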

*Claim expression

Example of claim expression:

email | {{ .username }}@your-domain.com

Forge first tries to read the email field. If that field is empty, the expression after the | is evaluated instead.

"username_claim": "|" generates a random username for each user who logs in through the provider.

Troubleshooting

Use the ldapwhoami tool to check whether your bind DN works. The tool is provided by the openldap-clients package.

ldapwhoami \
  -H ldap://ldap.com:389 \
  -D "CN=your_ldap_binddn_value_in_config" \
  -x \
  -W

The command prompts for the password interactively. On success it exits with code 0 and prints the DN you bound as.

Example: Using OpenLDAP Server

Run the following command to start your own LDAP server with an admin account and an additional user:

docker run -d --name openldap \

-p 1389:1389 \

-p 1636:1636 \

-e LDAP_ADMIN_USERNAME=admin \

-e LDAP_ADMIN_PASSWORD=pwd \

-e LDAP_USERS=user1 \

-e LDAP_PASSWORDS=pwd \

-e LDAP_ROOT=dc=example,dc=org \

-e LDAP_ADMIN_DN=cn=admin,dc=example,dc=org \

bitnami/openldap:latest

Your LDAP configuration for Forge should be as follows:

{

"ldap_binddn": "cn=admin,dc=example,dc=org",

"ldap_bindpassword": "pwd",

"ldap_server": "ldap-server.com:1389",

"ldap_searchdn": "dc=example,dc=org",

"ldap_searchfilter": "(&(objectClass=inetOrgPerson)(uid=%s))",

"ldap_mappings": {

"mail": "{{ .cn }}@ldap.your-domain.com",

"uid": "|",

"cn": "cn"

},

"ldap_enable": true,

"ldap_needtls": false

}

To run Forge in Docker, use the following LDAP configuration:

docker run -d -p 3000:3000 --name semaphore \

-e SEMAPHORE_DB_DIALECT=bolt \

-e SEMAPHORE_ADMIN=admin \

-e SEMAPHORE_ADMIN_PASSWORD=changeme \

-e SEMAPHORE_ADMIN_NAME=Admin \

-e SEMAPHORE_ADMIN_EMAIL=admin@localhost \

-e SEMAPHORE_LDAP_ENABLE=yes \

-e SEMAPHORE_LDAP_SERVER=ldap-server.com:1389 \

-e SEMAPHORE_LDAP_BIND_DN=cn=admin,dc=example,dc=org \

-e SEMAPHORE_LDAP_BIND_PASSWORD=pwd \

-e SEMAPHORE_LDAP_SEARCH_DN=dc=example,dc=org \

-e 'SEMAPHORE_LDAP_SEARCH_FILTER=(&(objectClass=inetOrgPerson)(uid=%s))' \

-e 'SEMAPHORE_LDAP_MAPPING_MAIL={{ .cn }}@ldap.your-domain.com' \

-e 'SEMAPHORE_LDAP_MAPPING_UID=|' \

-e 'SEMAPHORE_LDAP_MAPPING_CN=cn' \

semaphoreui/semaphore:latest
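The -e flags above mirror the JSON configuration one to one. As a rough sketch, the translation could be scripted as follows. The variable names come from the LDAP options table, the function name ldap_config_to_env is hypothetical, and rendering booleans as yes/no is an assumption: the Docker example uses yes for an enabled flag, while no for a disabled one is a guess.

```python
# Parameter-to-variable names are taken from the LDAP options table.
LDAP_ENV_NAMES = {
    "ldap_binddn": "SEMAPHORE_LDAP_BIND_DN",
    "ldap_bindpassword": "SEMAPHORE_LDAP_BIND_PASSWORD",
    "ldap_server": "SEMAPHORE_LDAP_SERVER",
    "ldap_searchdn": "SEMAPHORE_LDAP_SEARCH_DN",
    "ldap_searchfilter": "SEMAPHORE_LDAP_SEARCH_FILTER",
    "ldap_mappings.dn": "SEMAPHORE_LDAP_MAPPING_DN",
    "ldap_mappings.mail": "SEMAPHORE_LDAP_MAPPING_MAIL",
    "ldap_mappings.uid": "SEMAPHORE_LDAP_MAPPING_UID",
    "ldap_mappings.cn": "SEMAPHORE_LDAP_MAPPING_CN",
    "ldap_enable": "SEMAPHORE_LDAP_ENABLE",
    "ldap_needtls": "SEMAPHORE_LDAP_NEEDTLS",
}


def ldap_config_to_env(config: dict) -> dict:
    """Flatten an LDAP config dict into environment variable pairs."""
    flat = {k: v for k, v in config.items() if k != "ldap_mappings"}
    for field, value in config.get("ldap_mappings", {}).items():
        flat[f"ldap_mappings.{field}"] = value

    env = {}
    for key, value in flat.items():
        name = LDAP_ENV_NAMES.get(key)
        if name is None:
            continue  # not an LDAP parameter from the table
        if isinstance(value, bool):
            value = "yes" if value else "no"  # assumption, see lead-in
        env[name] = str(value)
    return env


example_config = {
    "ldap_binddn": "cn=admin,dc=example,dc=org",
    "ldap_bindpassword": "pwd",
    "ldap_server": "ldap-server.com:1389",
    "ldap_searchdn": "dc=example,dc=org",
    "ldap_searchfilter": "(&(objectClass=inetOrgPerson)(uid=%s))",
    "ldap_mappings": {
        "mail": "{{ .cn }}@ldap.your-domain.com",
        "uid": "|",
        "cn": "cn",
    },
    "ldap_enable": True,
    "ldap_needtls": False,
}

for name, value in ldap_config_to_env(example_config).items():
    print(f"{name}={value}")
```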

OpenID

Forge supports authentication via OpenID Connect (OIDC).

Links:

- GitHub config

- Google config

- GitLab config

- Authelia config

- Authentik config

- Keycloak config

- Okta config

- Azure config

- Zitadel config

Example of SSO provider configuration:

{

"oidc_providers": {

"mysso": {

"display_name": "Sign in with MySSO",

"color": "orange",

"icon": "login",

"provider_url": "https://mysso-provider.com",

"client_id": "***",

"client_secret": "***",

"redirect_url": "https://your-domain.com/api/auth/oidc/mysso/redirect"

}

}

}
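Every example in this section uses a redirect_url of the form /api/auth/oidc/<provider key>/redirect, where the provider key is the name used under oidc_providers. A small Python sketch of that pattern, with the hypothetical helper name oidc_redirect_url:

```python
from urllib.parse import quote


def oidc_redirect_url(base_url: str, provider_key: str) -> str:
    """Build the redirect URL for a provider key, following the
    pattern seen in this document's examples."""
    # Percent-encode the key so it stays a single path segment.
    key = quote(provider_key, safe="")
    return f"{base_url.rstrip('/')}/api/auth/oidc/{key}/redirect"


print(oidc_redirect_url("https://your-domain.com", "mysso"))
```

The same URL has to be registered as an allowed redirect URI on the identity provider side.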

Configure via environment variable

When running in containers it may be convenient to configure providers using a single environment variable:

SEMAPHORE_OIDC_PROVIDERS='{

"github": {

"client_id": "***",

"client_secret": "***"

}

}'

This value must be a valid JSON string matching the oidc_providers structure above.
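When the value is generated from a script, serializing it with a JSON library avoids hand-quoting mistakes. A minimal Python sketch, in which the helper name oidc_env_assignment is hypothetical and the provider values are the placeholders from the example above:

```python
import json
import shlex


def oidc_env_assignment(providers: dict) -> str:
    """Return a shell-safe SEMAPHORE_OIDC_PROVIDERS=... assignment."""
    # Compact JSON keeps the value on a single line.
    value = json.dumps(providers, separators=(",", ":"))
    # shlex.quote wraps the value so a POSIX shell passes it unchanged.
    return "SEMAPHORE_OIDC_PROVIDERS=" + shlex.quote(value)


providers = {"github": {"client_id": "***", "client_secret": "***"}}
print(oidc_env_assignment(providers))
```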

All SSO provider options:

| Parameter | Description |
|---|---|
| display_name | Provider name displayed on the login screen. |
| icon | MDI icon displayed before the provider name on the login screen. |
| color | Color of the provider button on the login screen. |
| client_id | Provider client ID. |
| client_id_file | Path to the file where the provider's client ID is stored. Has lower priority than client_id. |
| client_secret | Provider client secret. |
| client_secret_file | Path to the file where the provider's client secret is stored. Has lower priority than client_secret. |
| redirect_url | |
| provider_url | |
| scopes | |
| username_claim | Username claim expression*. |
| email_claim | Email claim expression*. |
| name_claim | Profile name claim expression*. |
| order | Position of the provider button on the sign-in screen. |
| endpoint.issuer | |
| endpoint.auth | |
| endpoint.token | |
| endpoint.userinfo | |
| endpoint.jwks | |
| endpoint.algorithms | |
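The precedence between client_secret and client_secret_file (and likewise for client_id) can be sketched in Python as follows. The helper name resolve_credential is hypothetical, and stripping trailing whitespace from the file content is an assumption, not documented Forge behavior.

```python
from pathlib import Path


def resolve_credential(provider: dict, name: str) -> str:
    """Return the inline value if set, else the content of <name>_file."""
    inline = provider.get(name)
    if inline:
        return inline  # the inline value has priority over the file
    file_path = provider.get(f"{name}_file")
    if file_path:
        # Assumption: a trailing newline in the file is not part of the value.
        return Path(file_path).read_text(encoding="utf-8").strip()
    return ""


provider = {"client_id": "***", "client_secret_file": "/run/secrets/oidc"}
print(resolve_credential(provider, "client_id"))
```

The file variants are convenient with container secret mounts, where the secret never has to appear in config.json or in the environment.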

*Claim expression

Example of claim expression:

email | {{ .username }}@your-domain.com

Forge first tries to read the email field. If that field is empty, the expression after the | is evaluated instead.
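The fallback behavior can be modeled with a short Python sketch. It is based only on the description in this section, so Forge's real evaluator may differ in details. The function name evaluate_claim is hypothetical, and the sketch assumes that an expression whose alternatives are all empty yields a random name.

```python
import re
import secrets

# Matches template placeholders such as {{ .username }}.
_PLACEHOLDER = re.compile(r"\{\{\s*\.(\w+)\s*\}\}")


def evaluate_claim(expression: str, claims: dict) -> str:
    """Try each |-separated alternative in order, return the first
    non-empty result, or a random value if none produces one."""
    for alternative in expression.split("|"):
        alternative = alternative.strip()
        if not alternative:
            continue
        if _PLACEHOLDER.search(alternative):
            # Template alternative: fill placeholders from the claims.
            value = _PLACEHOLDER.sub(
                lambda match: str(claims.get(match.group(1), "")), alternative
            )
        else:
            # Plain alternative: treat it as a claim field name.
            value = str(claims.get(alternative) or "")
        if value:
            return value
    return secrets.token_hex(8)  # assumption: random fallback


expression = "email | {{ .username }}@your-domain.com"
print(evaluate_claim(expression, {"email": "", "username": "jdoe"}))
```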

"username_claim": "|" generates a random username for each user who logs in through the provider.

Sign in screen

For each configured provider, an additional login button is added to the login page.

GitHub config

config.json:

{

"oidc_providers": {

"github": {

"icon": "github",

"display_name": "Sign in with GitHub",

"client_id": "***",

"client_secret": "***",

"redirect_url": "https://your-domain.com/api/auth/oidc/github/redirect",

"endpoint": {

"auth": "https://github.com/login/oauth/authorize",

"token": "https://github.com/login/oauth/access_token",

"userinfo": "https://api.github.com/user"

},

"scopes": ["read:user", "user:email"],

"username_claim": "|",

"email_claim": "email | {{ .id }}@github.your-domain.com",

"name_claim": "name",

"order": 1

}

}

}

Google config

config.json:

{

"oidc_providers": {

"google": {

"color": "blue",

"icon": "google",

"display_name": "Sign in with Google",

"provider_url": "https://accounts.google.com",

"client_id": "***.apps.googleusercontent.com",

"client_secret": "GOCSPX-***",

"redirect_url": "https://your-domain.com/api/auth/oidc/google/redirect",

"username_claim": "|",

"name_claim": "name",

"order": 2

}

}

}

GitLab config

config.json:

{

"oidc_providers": {

"gitlab": {

"display_name": "Sign in with GitLab",

"color": "orange",

"icon": "gitlab",

"provider_url": "https://gitlab.com",

"client_id": "***",

"client_secret": "gloas-***",

"redirect_url": "https://your-domain.com/api/auth/oidc/gitlab/redirect",

"username_claim": "|",

"order": 3

}

}

}

Tutorial in Forge blog: GitLab authentication in Forge.

Gitea config

config.json:

"oidc_providers": {

"github": {

"icon": "github",

"display_name": "Sign in with gitea instance",

"client_id": "123-456-789",

"client_secret": "**********",

"redirect_url": "https://your-semaphore.tld/api/auth/oidc/github/redirect",

"endpoint": {

"auth": "https://your-gitea.tld/login/oauth/authorize",

"token": "https://your-gitea.tld/login/oauth/access_token",

"userinfo": "https://your-gitea.tld/api/v1/user"

},

"scopes": ["read:user", "user:email"],

"username_claim": "login",

"email_claim": "email",

"name_claim": "full_name",

"order": 1

}

}

In your Gitea instance, go to https://your-gitea.tld/user/settings/applications and create a new OAuth2 application.

As the redirect URI, use https://your-semaphore.tld/api/auth/oidc/github/redirect.

Authentication works, but the name and username are not received correctly. The username becomes a unique ID in Forge and the name is set to "Anonymous", which users can change themselves. The email is mapped correctly.

Authelia config

Authelia config.yaml:

identity_providers:

oidc:

claims_policies:

semaphore_claims_policy:

id_token:

- groups

- email

- email_verified

- alt_emails

- preferred_username

- name

clients:

- client_id: semaphore

client_name: Forge

client_secret: 'your_secret'

claims_policy: semaphore_claims_policy

public: false

authorization_policy: two_factor

redirect_uris:

- https://your-semaphore-domain.com/api/auth/oidc/authelia/redirect

scopes:

- openid

- profile

- email

userinfo_signed_response_alg: none

Forge config.json:

"oidc_providers": {

"authelia": {

"display_name": "Authelia",

"provider_url": "https://your-authelia-domain.com",

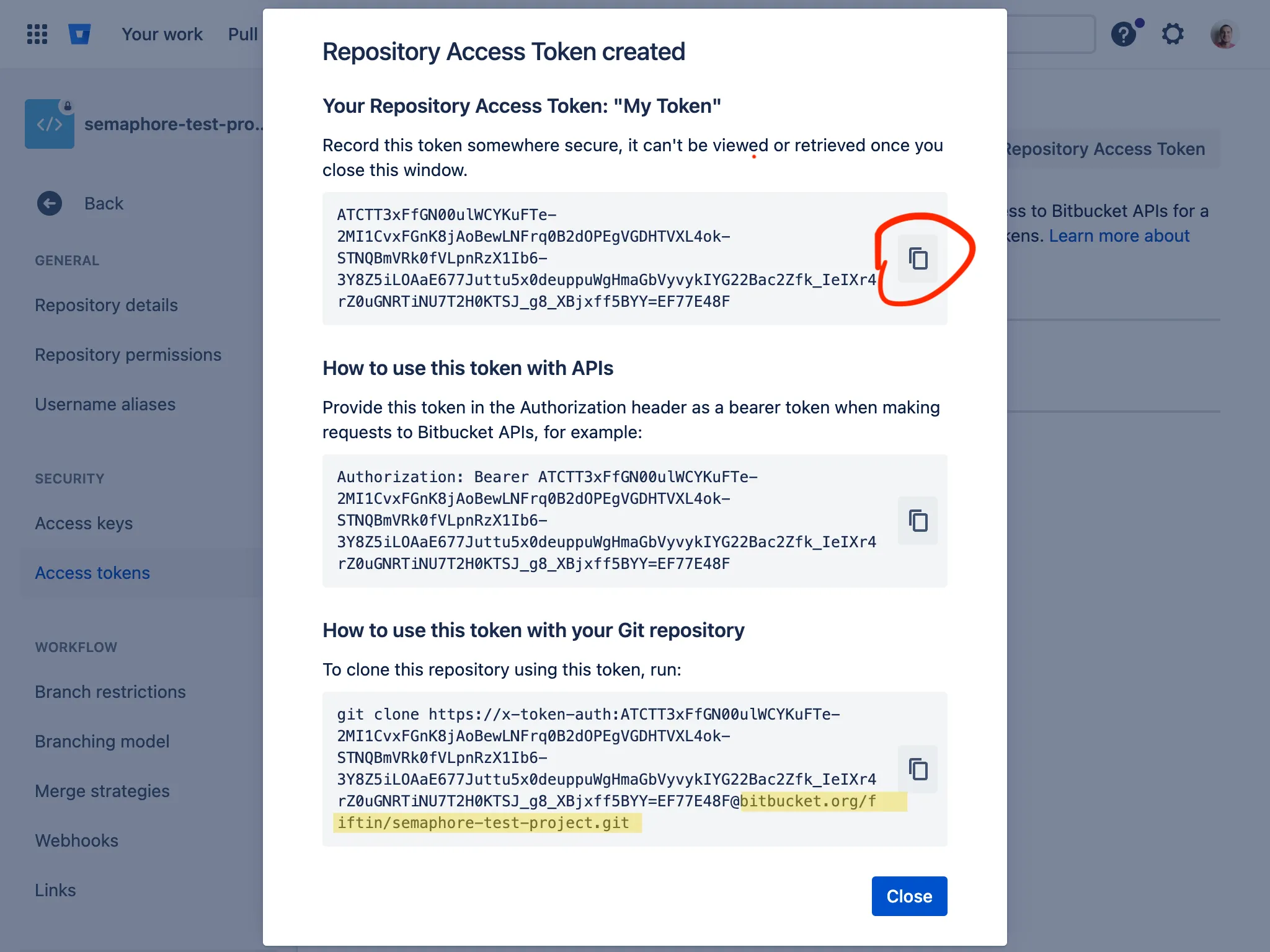

"client_id": "semaphore",